Smart Drones: Edge AI & DRL Extend Flight Time, Sharpen Response

New research introduces a deep reinforcement learning framework that intelligently offloads drone processing to edge servers, significantly extending battery life while maintaining critical low-latency operations. This approach could transform how drones handle complex AI tasks in real-time.

TL;DR: This paper presents a Deep Reinforcement Learning (DRL) system that dynamically decides whether a drone should process data locally or offload it to a powerful edge server. The result? Drones can fly significantly longer by leveraging external compute, without sacrificing critical real-time responsiveness.

Flying Smarter, Not Just Harder

For anyone pushing the boundaries of drone capabilities – from autonomous navigation to real-time object detection – the constant struggle is balancing processing power, battery life, and the need for instant reactions. Traditional approaches force a compromise: either pack heavy, power-hungry compute onboard, or offload everything to the cloud and pray for low latency. This new work from Sourya Saha and Saptarshi Debroy offers a smarter path forward, one that could fundamentally change how our drones operate by making intelligent, adaptive decisions about where their processing happens.

The Onboard vs. Offboard Conundrum

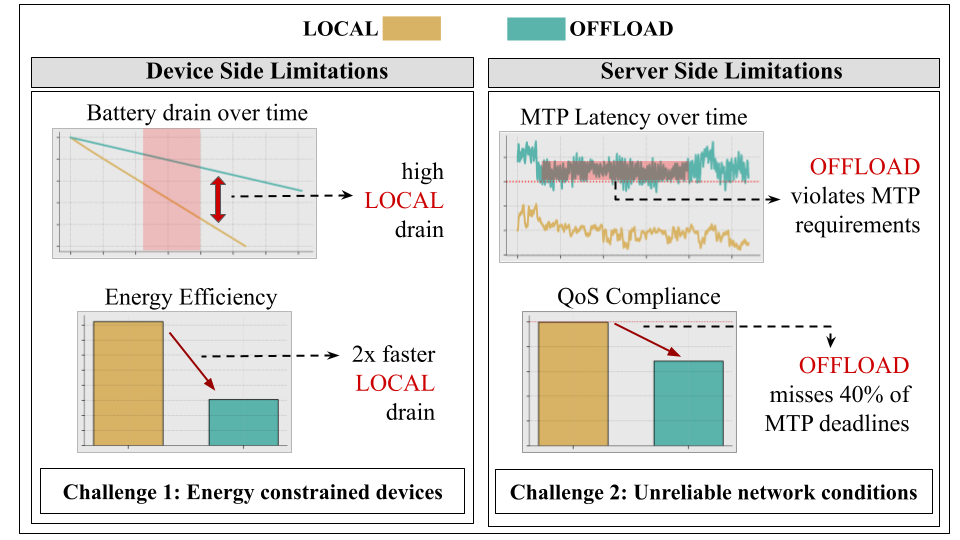

Autonomous drones demand a lot. They need to perceive their environment, map it, make decisions, and execute actions – all in milliseconds. Doing all of this on a compact, battery-powered device is a monumental engineering challenge. Local processing offers the lowest theoretical latency but drains batteries fast and limits payload capacity due to heavy hardware. Offloading to a remote server saves onboard power and weight, but introduces network latency, which can be unpredictable and fatal for real-time control. Current solutions often pick one extreme or use static rules, failing to adapt to changing network conditions, battery levels, or workload demands. This creates a non-trivial conflict between local and remote execution, as illustrated by the paper's authors:

Figure 1: The non-trivial conflict between Local vs Remote Offloading of XR workloads.

What's needed is a dynamic, intelligent system that can continuously weigh these trade-offs and make optimal decisions on the fly, ensuring a drone can handle complex perception and planning tasks without falling out of the sky or running out of juice prematurely.

The Brain Behind the Offloading: Deep Reinforcement Learning

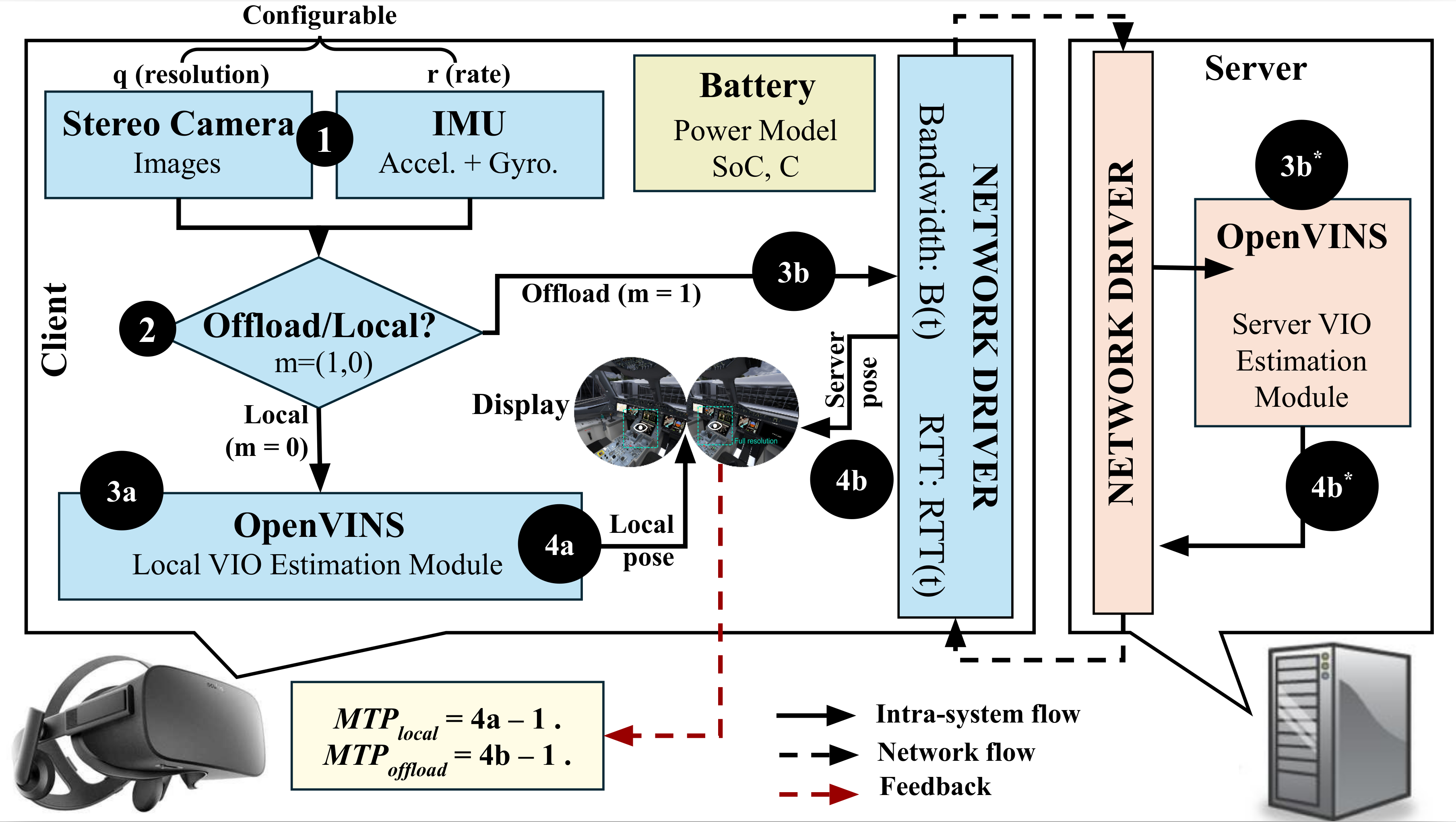

The core of this research is a battery-aware execution management framework powered by Deep Reinforcement Learning (DRL). Think of it as an intelligent agent constantly observing the drone's state and its environment, then making the best decision for processing. The system model is straightforward:

Figure 2: Overview of the edge-assisted interactive XR system model

Here’s how it works:

- Observation: The DRL agent continuously monitors key metrics: the drone's current battery level, the real-time network conditions (bandwidth, latency), and the current motion-to-photon (MTP) latency compliance (how quickly user input translates to visual feedback, crucial for real-time control).

- Decision Making: Based on these observations, the agent decides on three crucial parameters:

- Execution Placement: Should a specific workload segment be processed locally on the drone or offloaded to a nearby edge server?

- Workload Quality: What level of quality is acceptable for the processing? (e.g., lower resolution video processing to save bandwidth).

- IMU Rate: How frequently should inertial measurement unit data be sampled? (Higher rates consume more power and bandwidth but provide more precise motion data).

- Adaptation: The

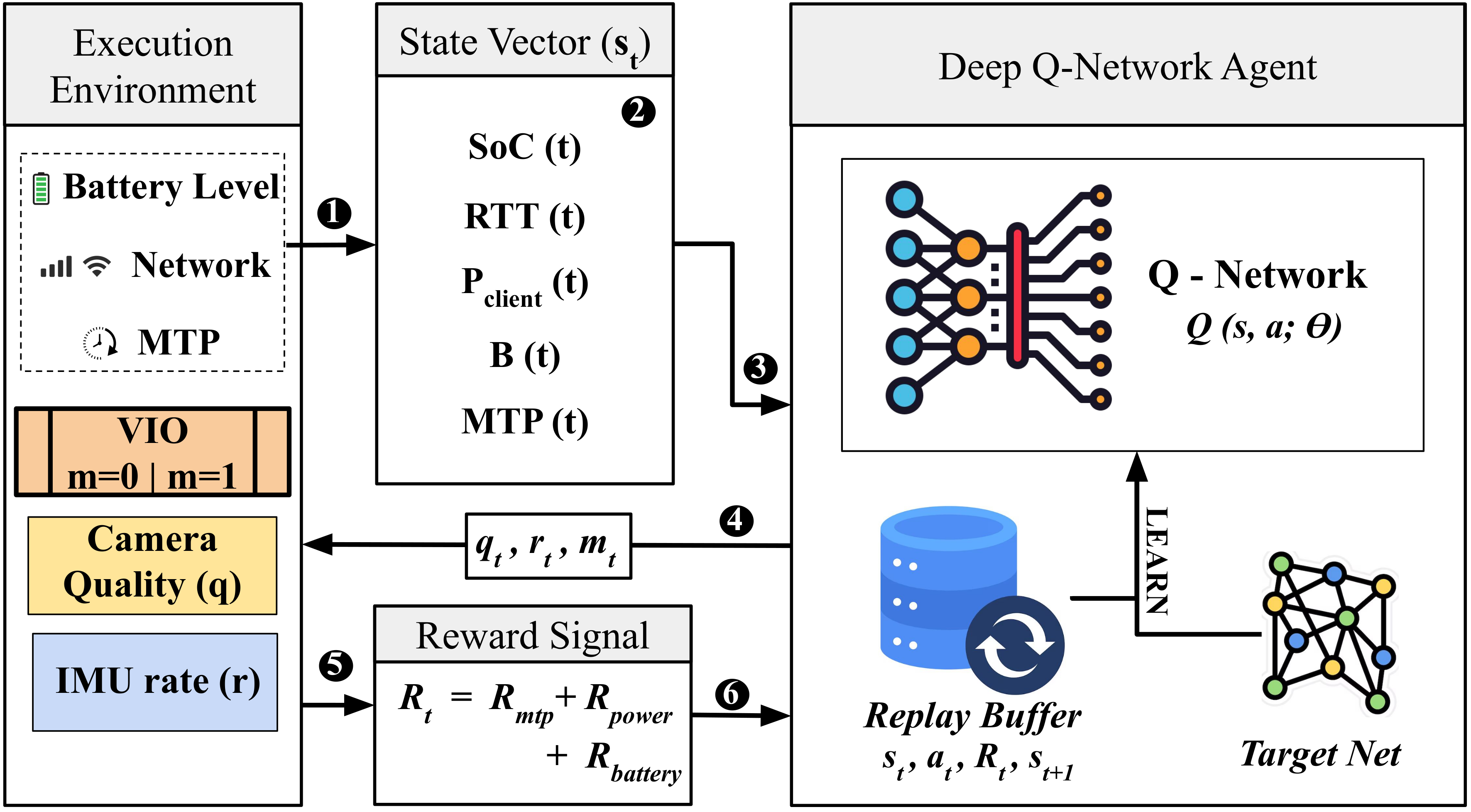

DRLpolicy is lightweight and designed for online adaptation. This means it learns and adjusts its strategy continuously as conditions change, maintaining high latency compliance even when the network fluctuates or the battery runs low. The control architecture visualizes this continuous feedback loop:

Figure 3: Reinforcement learning control architecture where the DQN agent continuously observes battery, network, and latency conditions to select optimal quality, IMU rate, and processing mode configurations that extend device lifetime under real-time constraints.

The DRL agent, specifically a Deep Q-Network (DQN), is trained to maximize battery lifetime while keeping latency below critical thresholds. It’s a sophisticated balancing act that prior, simpler heuristic-based methods struggle with.
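To make the observation, decision, and reward structure described above concrete, here is a minimal Python sketch. The article does not give the paper's actual discretisation or reward formula, so every level, threshold, and weight below (`QUALITY_LEVELS`, `IMU_RATES_HZ`, `mtp_deadline_ms`, `violation_penalty`, `max_power_w`) is a hypothetical placeholder chosen only for illustration.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical discretisation; the paper's real levels are not stated in this article.
PLACEMENTS = ("local", "edge")
QUALITY_LEVELS = ("low", "medium", "high")
IMU_RATES_HZ = (100, 200, 400)

# Each (placement, quality, IMU rate) combination is one discrete action for a DQN.
ACTIONS = list(product(PLACEMENTS, QUALITY_LEVELS, IMU_RATES_HZ))


@dataclass(frozen=True)
class Observation:
    battery_fraction: float  # remaining charge, 0.0 to 1.0
    bandwidth_mbps: float    # measured link bandwidth
    network_rtt_ms: float    # measured round-trip time
    mtp_compliance: float    # recent fraction of frames that met the MTP deadline


def reward(power_w: float, mtp_latency_ms: float,
           mtp_deadline_ms: float = 20.0,
           violation_penalty: float = 5.0,
           max_power_w: float = 10.0) -> float:
    """Assumed reward shape: favour low power draw, penalise a missed MTP deadline."""
    energy_term = 1.0 - min(power_w / max_power_w, 1.0)
    penalty = violation_penalty if mtp_latency_ms > mtp_deadline_ms else 0.0
    return energy_term - penalty


if __name__ == "__main__":
    obs = Observation(battery_fraction=0.6, bandwidth_mbps=80.0,
                      network_rtt_ms=12.0, mtp_compliance=0.93)
    print(obs)
    print(len(ACTIONS), "discrete actions")
    print(reward(power_w=5.0, mtp_latency_ms=10.0))
```

A single flat action list keeps the agent's output layer simple: one Q-value per combination. The penalty weight is the knob that sets how much battery saving the agent will give up to avoid a missed deadline.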

The Numbers Don't Lie: More Flight Time, Solid Latency

The experimental results are compelling and directly address the core drone challenges:

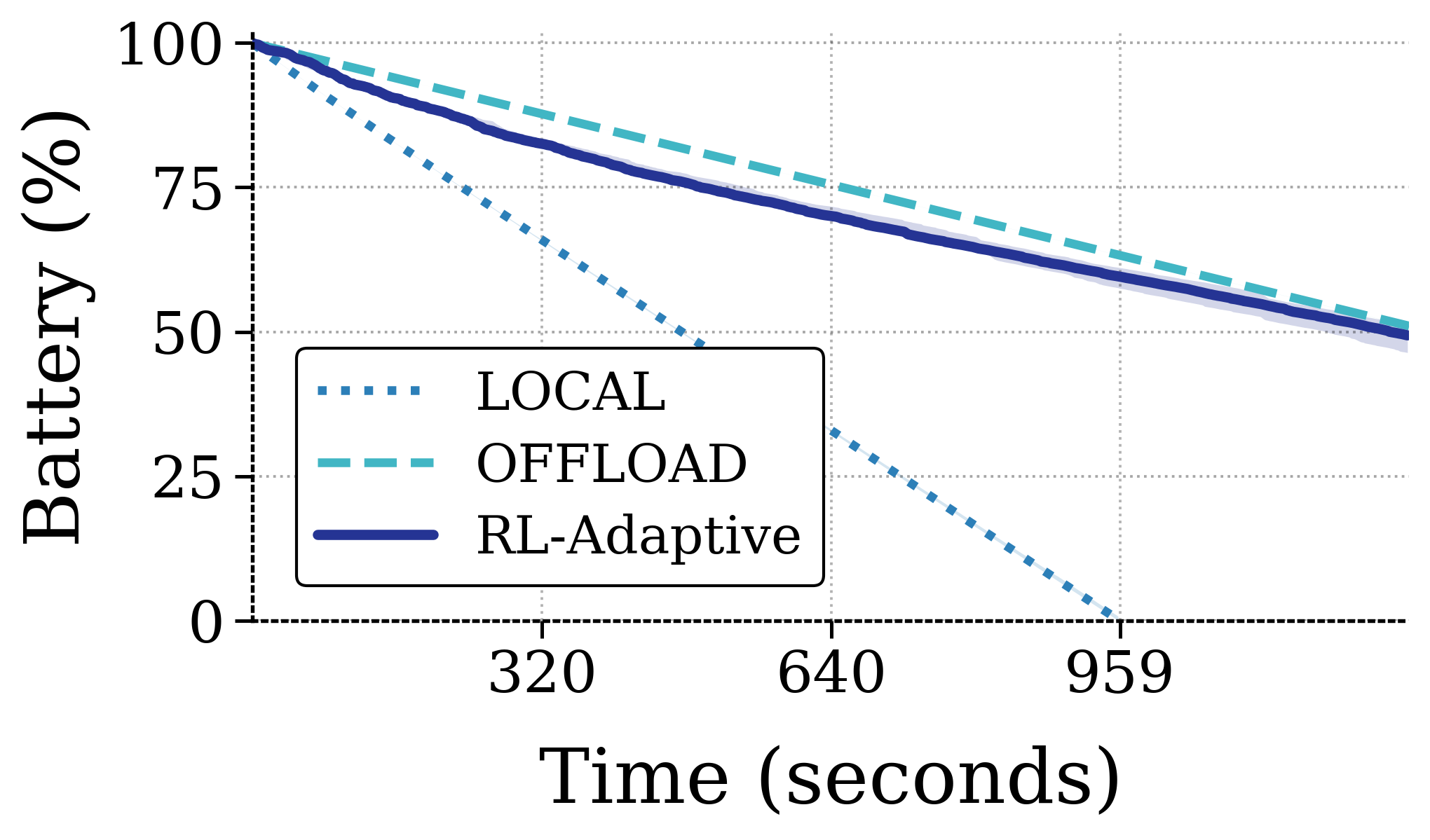

- Extended Battery Life: The proposed DRL approach extended projected device battery lifetime by up to 163% compared to a latency-optimal local execution strategy. That's a massive leap in operational endurance without adding physical battery weight. Figure 4(a) clearly shows the DRL strategy (labeled DRL-A) significantly flattening the battery depletion curve over time.

Figure 4: (a) Battery depletion over time

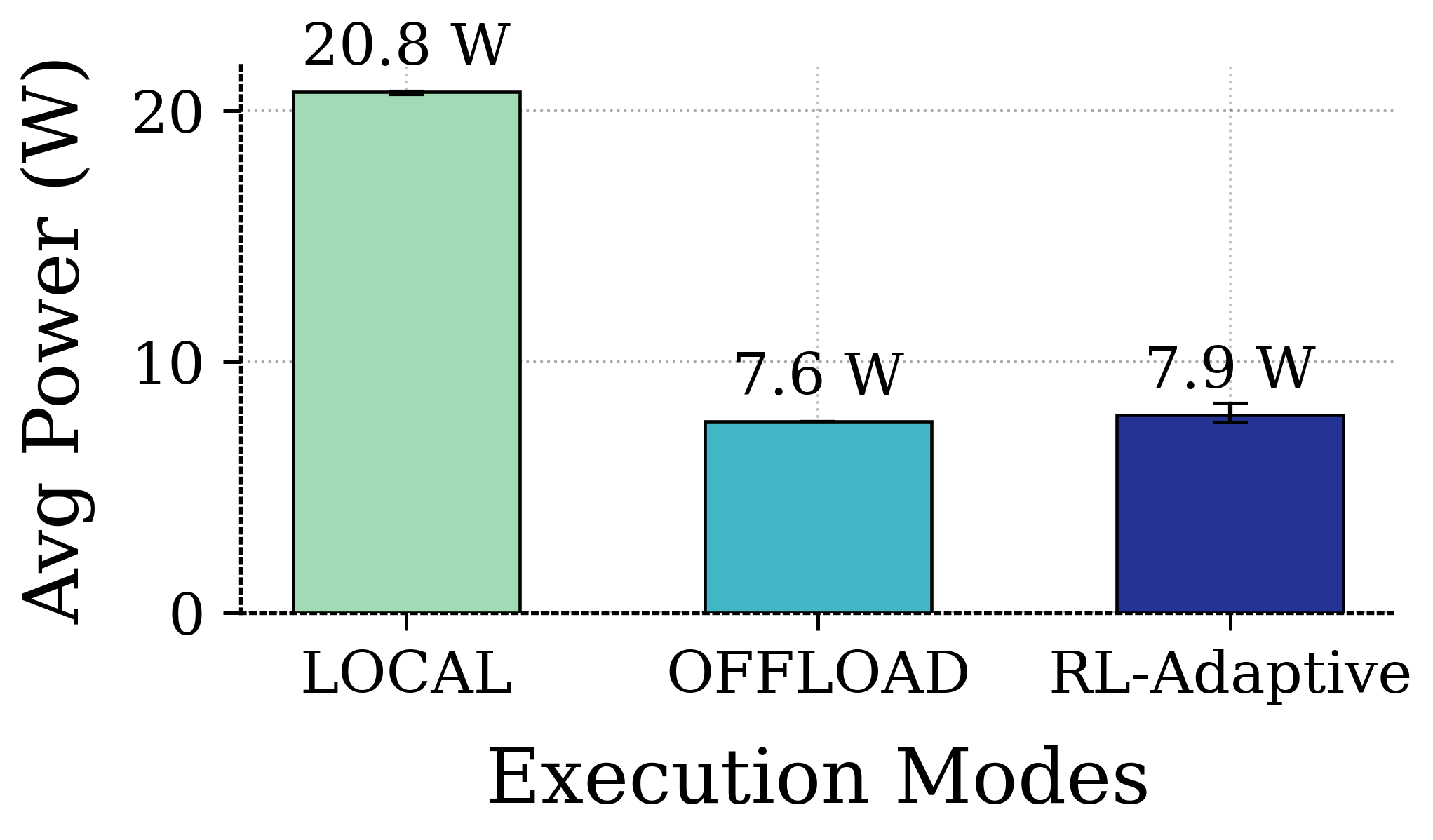

- Lower Power Consumption: This extension comes from a substantially lower average power consumption, as demonstrated in Figure 5(b).

Figure 5: (b) Average power consumption

- High Latency Compliance: Crucially, this power saving doesn't come at the cost of responsiveness. Under stable network conditions, the system maintained over 90% motion-to-photon latency compliance. Even under significantly limited network bandwidth availability, compliance did not fall below 80%, showcasing its robustness.

- Adaptive Performance: The

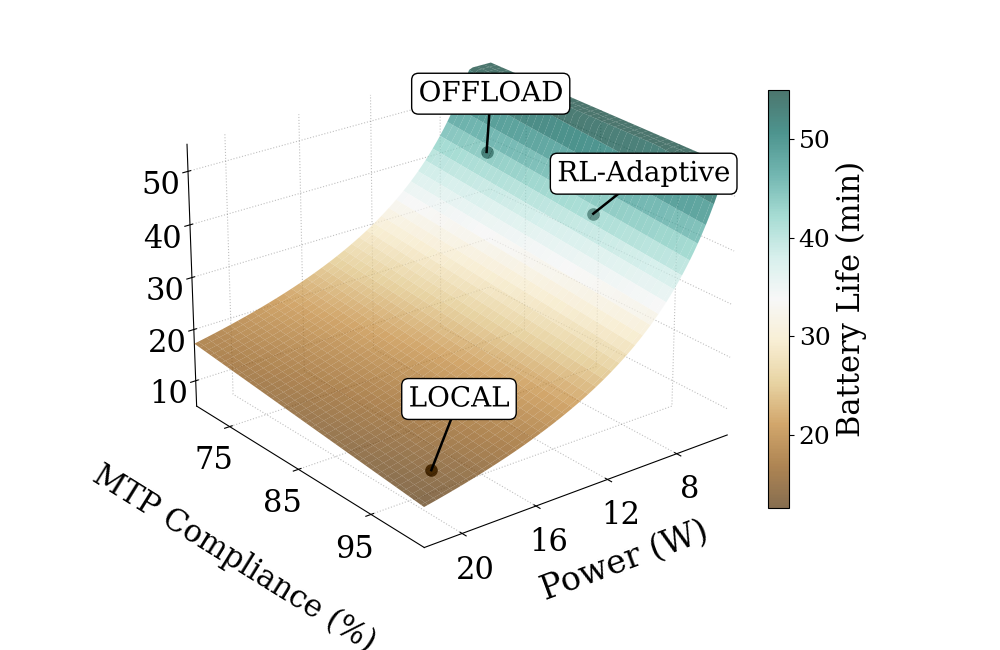

DRLagent actively manages the trade-off between power consumption and latency compliance, navigating the multi-objective Pareto surface, as shown in Figure 7(b), to find optimal operating points.

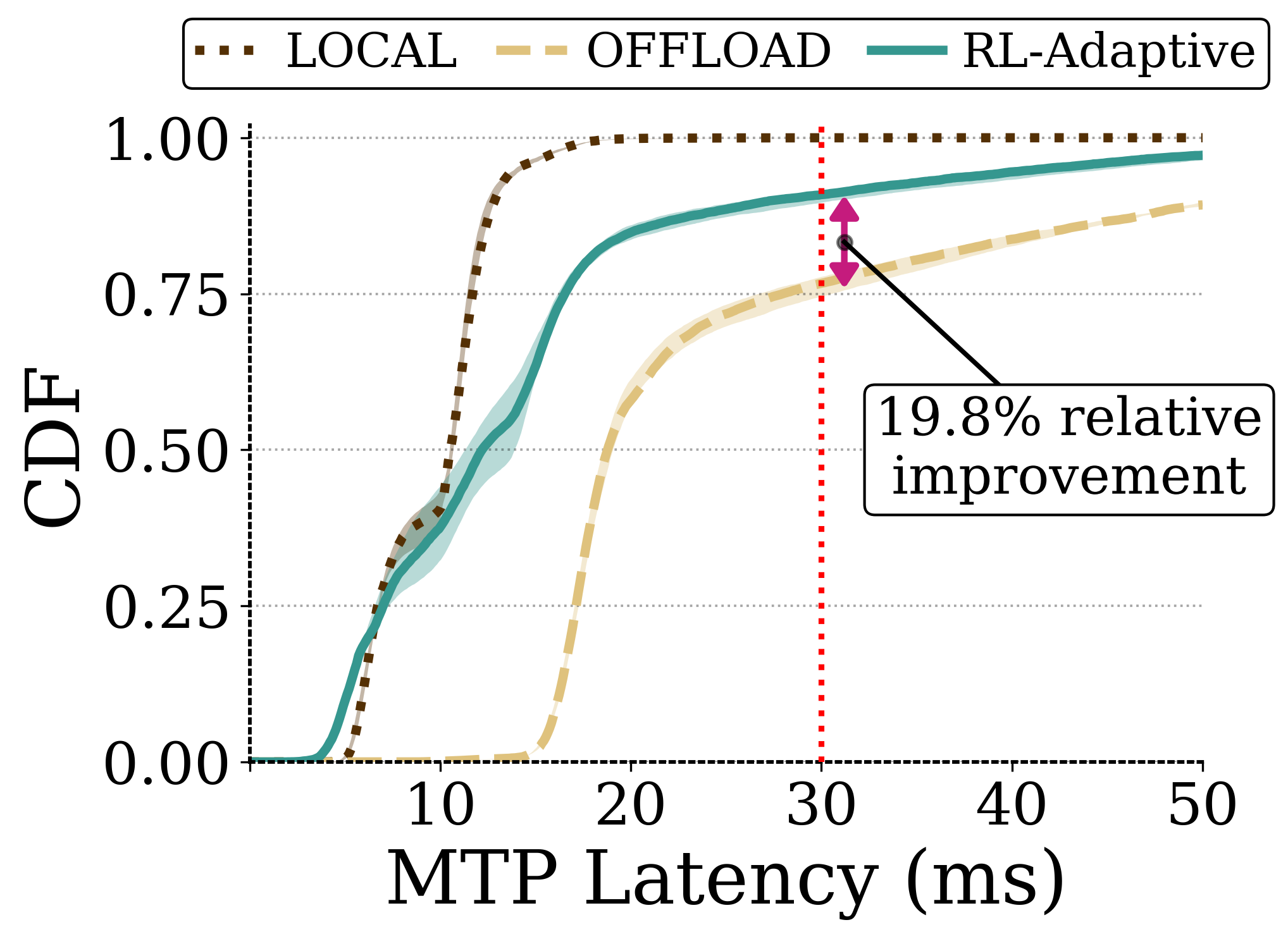

Figure 6: (a) CDF of MTP latency distribution under different execution strategies under normal network condition.

Figure 7: (b) Multi-objective trade-off between power consumption, MTP compliance, and battery life under stable network condition.

These results are not just marginal improvements; they represent a fundamental shift in how we can approach drone computation.
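For readers who want to sanity-check what these metrics mean, the sketch below shows one plausible way to compute them. It assumes a simple constant-power battery model (lifetime = capacity / average power) and defines MTP compliance as the share of frames at or under a deadline; the paper may define both differently, and all numbers are invented for illustration.

```python
from typing import Sequence


def projected_lifetime_h(capacity_wh: float, avg_power_w: float) -> float:
    """Assumed constant-power model: hours of runtime from a full battery."""
    return capacity_wh / avg_power_w


def lifetime_extension_pct(baseline_h: float, improved_h: float) -> float:
    """Relative gain over the baseline, in percent."""
    return (improved_h - baseline_h) / baseline_h * 100.0


def mtp_compliance(latencies_ms: Sequence[float], deadline_ms: float) -> float:
    """Fraction of frames whose motion-to-photon latency met the deadline."""
    met = sum(1 for latency in latencies_ms if latency <= deadline_ms)
    return met / len(latencies_ms)


if __name__ == "__main__":
    # Invented numbers: a 50 Wh battery, 10 W when everything runs locally.
    local_h = projected_lifetime_h(50.0, 10.0)
    # Under this model a 163% extension means 2.63x the lifetime,
    # which corresponds to drawing roughly 1 / 2.63 of the baseline power.
    adaptive_h = projected_lifetime_h(50.0, 10.0 / 2.63)
    print(local_h, adaptive_h, lifetime_extension_pct(local_h, adaptive_h))
    print(mtp_compliance([12.0, 18.0, 25.0, 15.0, 19.0], deadline_ms=20.0))
```

Read this way, "up to 163% longer" and "substantially lower average power" are two views of the same measurement, while compliance is tracked separately to confirm that the savings did not come from missed deadlines.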

Why This Matters for Drones: Real-World Capabilities

Consider a drone performing complex tasks like autonomous inspection, environmental monitoring, or search and rescue. Currently, these roles often require either very expensive, heavy drones with powerful onboard CPUs/GPUs, or they're limited by battery life and the processing they can do. With intelligent edge offloading, the game changes:

- Enhanced Perception: Drones could run far more sophisticated computer vision models. For instance, detailed 3D scene reconstruction, like the work in "MessyKitchens: Contact-rich object-level 3D scene reconstruction," could be performed in real-time. A drone could build precise, object-aware maps of a factory floor or a disaster site, offloading the heavy NeRF or SLAM computations to an edge server without missing a beat.

- Superior Visuals & Analytics: High-quality video processing is often computationally intense. Technologies like "SparkVSR: Interactive Video Super-Resolution via Sparse Keyframe Propagation" could be deployed on drones, delivering crystal-clear imagery for inspection or surveillance. The heavy super-resolution algorithms could run on the edge, leaving the drone free to focus on flight and data capture.

- Advanced Autonomy & Planning: The next frontier for drones involves more intelligent, human-like reasoning. Papers such as "DreamPlan: Efficient Reinforcement Fine-Tuning of Vision-Language Planners via Video World Models" explore using large Vision-Language Models (VLMs) for robotic planning. These models are enormous. Offloading their 'brain' to an edge server would allow even small drones to execute complex, high-level directives, transforming their operational capabilities from simple flight paths to nuanced, adaptive task execution.

This DRL framework makes these advanced AI-driven applications viable for smaller, lighter, and longer-flying drones, unlocking new commercial and research possibilities.

The Road Ahead: Limitations and Unanswered Questions

While promising, this research, like all good science, has its boundaries. It's important to be direct about what it doesn't solve yet:

- Edge Server Dependency: The effectiveness hinges on the availability and reliability of a nearby edge server. In remote areas without 5G or robust Wi-Fi 6E infrastructure, the benefits diminish or disappear entirely. The paper acknowledges this by testing under limited bandwidth, but a complete absence of connectivity is a different problem.

- Communication Overhead: While offloading reduces the power spent on onboard processing, the energy cost of wireless communication itself can be significant. The system optimizes the trade-off, but it doesn't eliminate this energy drain.

- Workload Specificity: The experiments used an XR workload. While the principles are transferable, adapting the DRL agent and its reward functions for highly specialized drone perception or control workloads (e.g., precise manipulation with a robotic arm) would require further research and training.

- Security and Privacy: Offloading sensitive drone data to an external server introduces new security and privacy considerations that are not addressed in this paper. For critical applications, this would need robust encryption and secure communication protocols.

- Scalability for Swarms: The paper focuses on a single device. Scaling this adaptive offloading to a swarm of drones coordinating their tasks and competing for edge server resources is a complex challenge that would require distributed DRL and resource management.
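The communication-overhead point above can be illustrated with a back-of-the-envelope energy comparison. This is a simplified sketch, not the paper's energy model: it assumes per-frame energy is power multiplied by time, that offloading costs radio transmit energy plus idle energy while waiting for the result, and every number is made up.

```python
def local_energy_j(compute_power_w: float, compute_time_s: float) -> float:
    """Energy to process one frame on the drone itself."""
    return compute_power_w * compute_time_s


def offload_energy_j(payload_bits: float, uplink_bps: float,
                     radio_tx_power_w: float, idle_power_w: float,
                     wait_time_s: float) -> float:
    """Energy to transmit one frame and idle until the edge server replies."""
    tx_time_s = payload_bits / uplink_bps
    return radio_tx_power_w * tx_time_s + idle_power_w * wait_time_s


if __name__ == "__main__":
    # Illustrative values only: 8 W onboard compute for 30 ms per frame.
    local = local_energy_j(compute_power_w=8.0, compute_time_s=0.030)
    # A 2 Mbit frame over a fast link versus a congested one.
    fast = offload_energy_j(2e6, 100e6, radio_tx_power_w=2.0,
                            idle_power_w=1.0, wait_time_s=0.015)
    slow = offload_energy_j(2e6, 5e6, radio_tx_power_w=2.0,
                            idle_power_w=1.0, wait_time_s=0.015)
    for name, energy in (("local", local), ("edge, fast link", fast),
                         ("edge, slow link", slow)):
        print(f"{name}: {energy:.3f} J per frame")
```

With these invented figures offloading wins comfortably on the fast link and loses on the slow one, because transmit time grows as bandwidth shrinks. That crossover is why a static "always offload" rule is not enough and why lowering workload quality (a smaller payload) is a useful action for the agent to have.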

DIY Feasibility: A Challenging but Rewarding Endeavor

Can a dedicated hobbyist or small team replicate this? The core components are accessible, but implementing the DRL framework from scratch would be a substantial project. You'd need:

- Hardware: A drone platform capable of robust wireless communication (Wi-Fi 6E or 5G module). An edge server, which could be a powerful NVIDIA Jetson AGX or a small form-factor PC with a dedicated GPU, would be essential for handling the offloaded AI tasks.

- Software: Open-source DRL libraries like PyTorch, TensorFlow, or RLlib provide the foundation. You'd need to define your drone's state space (battery, network, workload), action space (offload, quality, IMU rate), and reward function (maximize battery, minimize latency violations).

- Expertise: A solid understanding of machine learning, especially reinforcement learning, network protocols, and embedded systems programming, would be necessary. This isn't a plug-and-play solution, but the foundational research and tools are out there for those willing to dive deep.
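Before reaching for a deep learning library, it can help to prototype the decision loop with something much smaller. The sketch below deliberately swaps the paper's DQN for plain tabular Q-learning on a two-state toy environment, using only the Python standard library. The environment dynamics, power figures, and penalty in `step` are entirely invented; the point is only to show the state, action, reward, and update cycle a hobbyist would need to wire up.

```python
import random
from collections import defaultdict

ACTIONS = ("local", "edge")


def step(network_good: bool, action: str, rng: random.Random):
    """Toy environment (made up): returns (reward, next_network_good)."""
    if action == "local":
        power_w, deadline_met = 8.0, True    # meets the deadline but is power hungry
    elif network_good:
        power_w, deadline_met = 3.0, True    # cheap and on time over a good link
    else:
        power_w, deadline_met = 3.0, False   # cheap, but the result arrives late
    reward = -power_w - (0.0 if deadline_met else 20.0)
    next_network_good = rng.random() < 0.7   # link is good 70% of the time
    return reward, next_network_good


def train(steps: int = 20000, alpha: float = 0.05, gamma: float = 0.9,
          epsilon: float = 0.1, seed: int = 0):
    """Epsilon-greedy tabular Q-learning over the toy environment."""
    rng = random.Random(seed)
    q = defaultdict(float)                   # q[(state, action)] -> value estimate
    state = True
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice(ACTIONS)     # explore
        else:
            action = max(ACTIONS, key=lambda a: q[(state, a)])  # exploit
        reward, next_state = step(state, action, rng)
        best_next = max(q[(next_state, a)] for a in ACTIONS)
        td_target = reward + gamma * best_next
        q[(state, action)] += alpha * (td_target - q[(state, action)])
        state = next_state
    return q


def greedy_policy(q):
    """Best known action for each network state."""
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in (True, False)}


if __name__ == "__main__":
    print(greedy_policy(train()))
```

In this toy setup the learned policy should settle on offloading when the link is good and computing locally when it is not. A real system would replace the lookup table with a neural network (that is what makes it a DQN), feed it continuous battery and network measurements, and expand the action set to include quality and IMU rate.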

This research points to a future where drones aren't just flying cameras or simple automatons, but intelligent, adaptive systems that dynamically leverage the distributed computational power of edge networks. The question now isn't if drones will get smarter, but how quickly we can deploy these advanced capabilities into the real world.

Paper Details

Title: Deep Reinforcement Learning-driven Edge Offloading for Latency-constrained XR pipelines

Authors: Sourya Saha, Saptarshi Debroy

Published: N/A

arXiv: 2603.16823

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.