AdaRadar: All-Weather Drone Vision Without the Data Bloat

A new technique, AdaRadar, dramatically compresses radar data by over 100x with minimal performance loss, enabling robust all-weather perception for drones on low-bandwidth links.

TL;DR: AdaRadar introduces an adaptive radar data compression scheme that cuts data size by over 100 times while maintaining critical perception accuracy. It dynamically adjusts compression based on task performance, allowing drones to leverage high-resolution radar without saturating their communication links.

Beyond the Haze: Radar Vision for Drones

Modern drones are increasingly sophisticated, capable of complex maneuvers and tasks. However, their 'eyes', primarily cameras, often fail them when conditions turn challenging. Heavy fog, torrential rain, or simply the darkness of night can render traditional optical sensors useless, grounding missions or creating dangerous situations. This is a critical limitation for autonomous systems meant to operate reliably in the real world. Where cameras struggle, radar offers a robust alternative: it directly measures the range and velocity of objects regardless of visibility. The catch? Raw radar data is a notorious bandwidth hog, often too large to move efficiently from the sensor to the onboard processing unit, especially on small drones with tight compute and communication budgets. This is where AdaRadar steps in, aiming to give drones all-weather radar vision without flooding them with data.

The Bandwidth Bottleneck: Why Current Radar Fails Drones

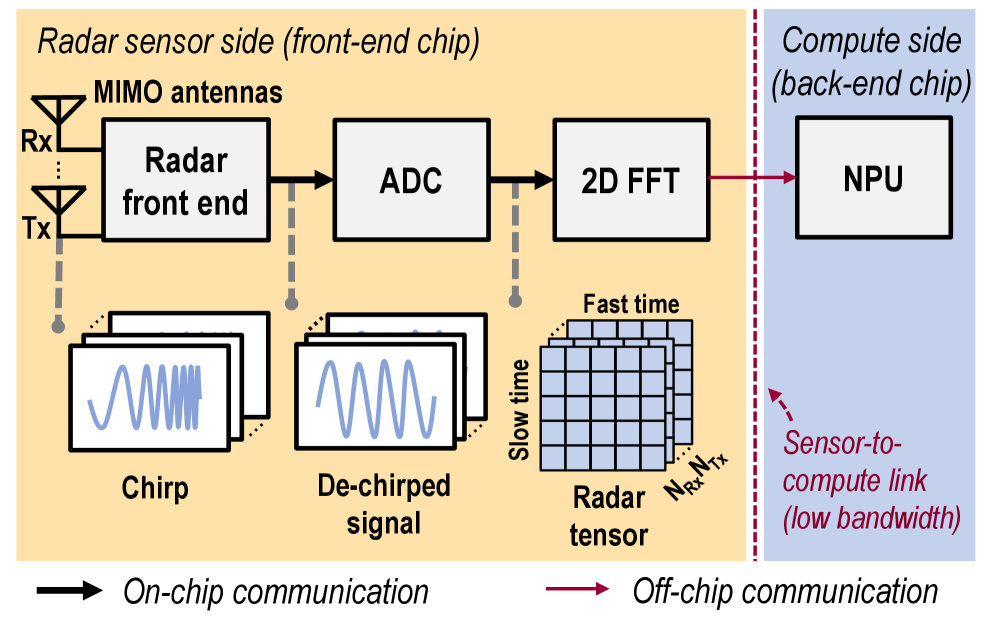

Today's advanced drone perception systems typically fuse data from multiple sensors: high-resolution cameras for rich visual context, LiDAR for precise 3D mapping, and occasionally low-resolution radar for basic obstacle avoidance. Each has its strengths and, crucially, its weaknesses. Cameras provide unparalleled detail in good light but degrade badly in heavy rain, dense fog, or darkness. LiDAR generates detailed 3D point clouds, but it is often expensive, adds significant weight to a drone, and can struggle with heavy precipitation or dust. Radar stands out as the all-weather option: it sees through fog, rain, and darkness and reports the range and Doppler (radial) velocity of objects in the scene.

The challenge lies with high-resolution radar. While essential for detailed tasks such as precise object detection and tracking, it produces a huge volume of data, the 'raw radar tensor' (Figure 3). This data deluge quickly saturates the low-bandwidth link between a drone's radar sensor and its main compute engine (e.g., an NPU). Traditional compression methods, often borrowed from image processing, are ill-suited to the task. They are usually fixed-ratio, so they cannot adapt to changing conditions or prioritize the information that matters most for perception. The result is a dilemma: compress too hard and perception accuracy suffers; compress too little and the link is still overwhelmed. What's needed is a system that keeps the information perception actually depends on while drastically shrinking everything else.
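To get a feel for the volume, here is a back-of-the-envelope estimate of a raw ADC data rate. The radar configuration used (256 samples per chirp, 128 chirps per frame, 12 virtual channels, 16-bit real samples, 20 frames per second) is a hypothetical example, not a figure from the paper; `raw_radar_rate_bps` is an illustrative helper.

```python
# Back-of-the-envelope raw data rate for an FMCW radar.
# All parameter values below are hypothetical placeholders, not from the paper.

def raw_radar_rate_bps(samples_per_chirp: int,
                       chirps_per_frame: int,
                       virtual_channels: int,
                       bits_per_sample: int,
                       frames_per_second: float,
                       complex_samples: bool = False) -> float:
    """Return the raw ADC data rate in bits per second."""
    values_per_sample = 2 if complex_samples else 1  # I/Q sampling doubles the payload
    bits_per_frame = (samples_per_chirp * chirps_per_frame * virtual_channels
                      * values_per_sample * bits_per_sample)
    return bits_per_frame * frames_per_second


rate = raw_radar_rate_bps(samples_per_chirp=256, chirps_per_frame=128,
                          virtual_channels=12, bits_per_sample=16,
                          frames_per_second=20)
print(f"{rate / 1e6:.1f} Mbit/s before any compression")
```

With these assumed numbers the sensor produces roughly 126 Mbit/s; a 100x reduction would bring that down to around 1.3 Mbit/s.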

AdaRadar's Secret Sauce: Adaptive Compression on the Fly

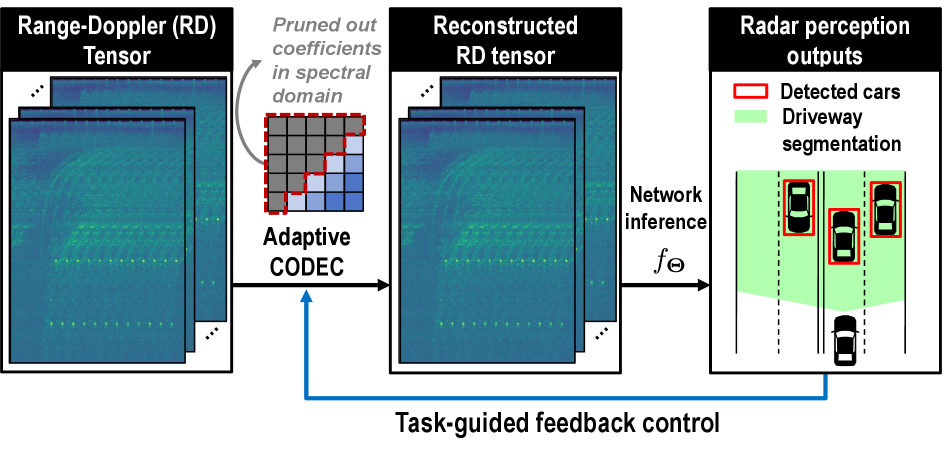

AdaRadar tackles this head-on with an intelligent, adaptive compression framework. The core idea is to dynamically adjust the compression ratio based on the performance of the downstream perception task – think object detection or segmentation. Instead of a fixed compression rate, AdaRadar uses a feedback loop (Figure 1). This loop continuously evaluates how well the compressed data is performing and fine-tunes the compression to maintain accuracy while minimizing data throughput.

Figure 1: Adaptive codec with task-guided feedback control. We propose an adaptive codec that compresses high-dimensional range-Doppler data by pruning spectral domain coefficients. A feedback loop adaptively regulates the compression ratio, guided by the performance of downstream perception tasks.

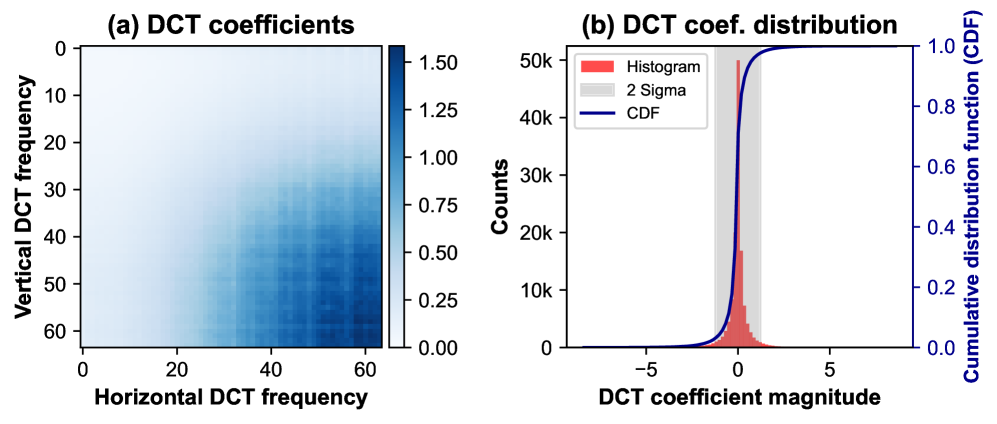

The process starts with the raw radar data being transformed into a range-Doppler cube (Figure 3). This cube is then passed through a Discrete Cosine Transform (DCT) to move the data into the spectral (frequency) domain. The authors found that, in this domain, the energy of radar feature maps is concentrated in a few frequency components (Figure 4a), which makes them highly compressible.

Figure 3: Radar-based perception system overview. An FMCW radar transmits linearly swept‑frequency chirps. The incoming echo is mixed with a copy of the transmitted chirp at the receiver, yielding a de‑chirped intermediate‑frequency signal that the ADC digitizes to form a raw radar tensor. Successive FFTs along the fast‑time and slow‑time axes convert this tensor into a range–Doppler cube. The large, raw radar tensor is transferred to the NPU over the power-hungry sensor-to-compute link for network inference.
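The two FFT stages in Figure 3 can be sketched in NumPy for a single receive channel. The synthetic single-target signal, the array sizes, and the Hann windowing are assumptions for illustration; a real pipeline also handles multiple antennas, calibration, and non-integer target frequencies.

```python
import numpy as np


def range_doppler_map(raw: np.ndarray) -> np.ndarray:
    """raw: complex de-chirped samples shaped (chirps, samples_per_chirp)."""
    # Hann windows on both axes reduce sidelobe leakage.
    win_fast = np.hanning(raw.shape[1])
    win_slow = np.hanning(raw.shape[0])[:, None]
    range_fft = np.fft.fft(raw * win_fast, axis=1)        # fast time -> range bins
    rd = np.fft.fft(range_fft * win_slow, axis=0)         # slow time -> Doppler bins
    rd = np.fft.fftshift(rd, axes=0)                      # put zero velocity in the middle
    return np.abs(rd)


# Hypothetical single target whose beat and Doppler frequencies fall on exact bins.
n_chirps, n_samples = 64, 128
range_bin, doppler_bin = 30, 5
n = np.arange(n_samples)[None, :]
c = np.arange(n_chirps)[:, None]
raw = np.exp(2j * np.pi * (range_bin * n / n_samples + doppler_bin * c / n_chirps))

rd = range_doppler_map(raw)
peak_doppler, peak_range = np.unravel_index(np.argmax(rd), rd.shape)
print(peak_range, peak_doppler - n_chirps // 2)  # -> 30 5
```

The peak lands at the target's range bin and, after undoing the `fftshift` offset, at its Doppler bin.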

Figure 4: Motivation for spectral pruning and quantization. (a) The DCT coefficient magnitudes are clustered in the high-frequency bins. (b) Their histogram is sharply peaked, highlighting strong sparsity and clear opportunities for compression.
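The sparsity argument behind Figure 4 can be checked on any map by measuring how much energy the largest DCT coefficients hold. The map below is a hypothetical smooth stand-in built from two Gaussian blobs plus weak noise, not real radar data; `energy_in_top_fraction` is an illustrative helper. Using `norm="ortho"` keeps the DCT energy-preserving, so the ratio is meaningful.

```python
import numpy as np
from scipy.fft import dctn


def energy_in_top_fraction(x: np.ndarray, fraction: float) -> float:
    """Share of total energy held by the largest `fraction` of orthonormal DCT coefficients."""
    coeffs = dctn(x, norm="ortho")                  # orthonormal: energy preserved
    energy = np.sort(np.abs(coeffs).ravel() ** 2)[::-1]
    k = max(1, int(round(fraction * energy.size)))
    return float(energy[:k].sum() / energy.sum())


rng = np.random.default_rng(0)
r, d = np.meshgrid(np.arange(64), np.arange(64), indexing="ij")
# Hypothetical stand-in for a range-Doppler map: two smooth returns plus weak noise.
rd_map = (np.exp(-((r - 20) ** 2 + (d - 40) ** 2) / (2 * 4.0 ** 2))
          + 0.7 * np.exp(-((r - 45) ** 2 + (d - 18) ** 2) / (2 * 4.0 ** 2))
          + 0.01 * rng.standard_normal((64, 64)))
noise = rng.standard_normal((64, 64))

print(f"structured map: {energy_in_top_fraction(rd_map, 0.05):.3f}")
print(f"white noise:    {energy_in_top_fraction(noise, 0.05):.3f}")
```

The structured map keeps nearly all of its energy in the top 5% of coefficients, while white noise does not. How strongly real range-Doppler data compacts depends on the scene; Figure 4 reports this for actual radar data.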

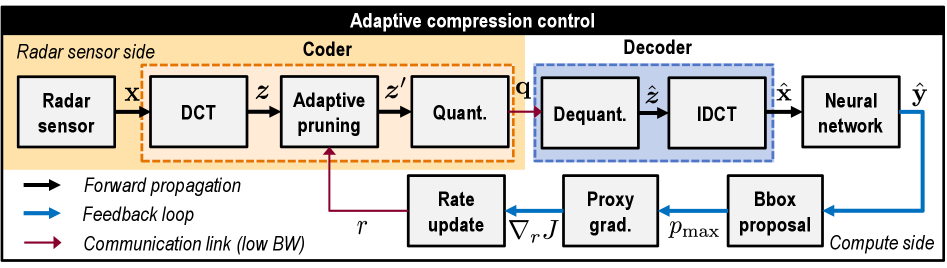

In this spectral domain, AdaRadar performs 'adaptive spectral pruning', selectively discarding less important frequency coefficients, combined with 'scaled quantization' that preserves the dynamic range of the remaining data patches. The key ingredient is the adaptive feedback: after running its perception task (such as object detection), the compute engine sends back a 'proxy gradient' of detection confidence with respect to the compression rate. This gradient is estimated with a zeroth-order method, that is, from detection outputs alone rather than by backpropagating through the codec, so no large gradient tensors have to travel back over the link to the sensor. The sensor then adjusts its pruning rate (Figure 2) in real time, keeping compression high without sacrificing critical object detection performance.
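The prune-then-quantize idea can be sketched as a small round-trip codec. This is a minimal sketch, not the paper's codec: it assumes 8×8 patches, keeps the largest-magnitude DCT coefficients in each patch, and quantizes them to int8 with one scale per patch. The names `compress_patch`, `decompress_patch`, `codec`, and `PATCH` are hypothetical, and a real magnitude-based scheme would also have to signal which coefficients survived, which this sketch does not encode.

```python
import numpy as np
from scipy.fft import dctn, idctn

PATCH = 8  # hypothetical patch size


def compress_patch(patch: np.ndarray, keep_ratio: float):
    """DCT one patch, keep its largest-magnitude coefficients, quantize them to int8."""
    coeffs = dctn(patch, norm="ortho")
    k = max(1, int(round(keep_ratio * coeffs.size)))
    threshold = np.sort(np.abs(coeffs).ravel())[-k]
    kept = np.where(np.abs(coeffs) >= threshold, coeffs, 0.0)  # ties may keep a few extra
    peak = np.abs(kept).max()
    scale = peak / 127.0 if peak > 0 else 1.0  # per-patch scale preserves dynamic range
    q = np.round(kept / scale).astype(np.int8)
    return q, scale


def decompress_patch(q: np.ndarray, scale: float) -> np.ndarray:
    """Dequantize and invert the DCT."""
    return idctn(q.astype(np.float64) * scale, norm="ortho")


def codec(x: np.ndarray, keep_ratio: float) -> np.ndarray:
    """Round-trip a 2D map through the sketch codec, patch by patch."""
    out = np.empty(x.shape, dtype=np.float64)
    for i in range(0, x.shape[0], PATCH):
        for j in range(0, x.shape[1], PATCH):
            q, s = compress_patch(x[i:i + PATCH, j:j + PATCH], keep_ratio)
            out[i:i + PATCH, j:j + PATCH] = decompress_patch(q, s)
    return out


# Usage on a hypothetical smooth map with weak noise.
rng = np.random.default_rng(1)
r, d = np.meshgrid(np.arange(64), np.arange(64), indexing="ij")
rd_map = np.exp(-((r - 30) ** 2 + (d - 20) ** 2) / 50.0) + 0.01 * rng.standard_normal((64, 64))
for keep in (1.0, 0.25, 0.1):
    err = np.linalg.norm(codec(rd_map, keep) - rd_map) / np.linalg.norm(rd_map)
    print(f"keep {keep:.2f}: relative error {err:.4f}")
```

The pruning rate here is the knob a feedback loop would turn: lowering `keep_ratio` shrinks the payload and raises reconstruction error.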

Figure 2: AdaRadar: Online rate-adaptive radar compression framework. Our proposed method introduces a feedback loop in which the proxy gradient is computed from the detection outputs to update the compression ratio adaptively. This avoids the need for backpropagation through the communication channel. The radar tensor is compressed using DCT, adaptive spectral pruning, and scaled quantization, then transmitted to the compute side. In an object-detection setting, the neural network produces detection results from decompressed radar data cubes. The loop uses proposed bounding boxes to estimate the proxy gradient, thereby updating the pruning rate.
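The zeroth-order update can be illustrated with a central finite difference on a scalar objective. Everything here is a hypothetical stand-in: `toy_confidence` is a made-up curve of detection confidence versus pruning rate, and the objective J(p) = confidence(p) + λ·p (reward pruning, penalize lost confidence) plus the values of `lam`, `lr`, and `delta` are assumptions, not the paper's formulation. The point is that each update needs only two scalar confidence readings, not gradients through the network or the link. In a live system those readings might come from successive frames compressed at slightly different rates.

```python
import numpy as np


def zeroth_order_grad(f, x: float, delta: float) -> float:
    """Two-point (central difference) estimate of df/dx from function values only."""
    return (f(x + delta) - f(x - delta)) / (2.0 * delta)


def toy_confidence(prune_rate: float) -> float:
    """Hypothetical mean detection confidence vs. pruning rate:
    flat at low rates, collapsing once too many coefficients are discarded."""
    return 0.9 / (1.0 + np.exp((prune_rate - 0.95) / 0.01))


def adapt_prune_rate(conf_fn, p0=0.5, lam=1.0, lr=0.01, delta=0.005, steps=500):
    """Gradient ascent on J(p) = conf(p) + lam * p using zeroth-order gradient estimates."""
    def objective(p: float) -> float:
        return conf_fn(p) + lam * p

    p = p0
    for _ in range(steps):
        g = zeroth_order_grad(objective, p, delta)
        p = float(np.clip(p + lr * g, 0.0, 0.999))  # keep the rate in a valid range
    return p


p = adapt_prune_rate(toy_confidence)
print(f"pruning rate {p:.3f}, confidence {toy_confidence(p):.3f}")
```

On this toy curve the rate climbs steadily and then settles a little below 0.95, just before confidence starts to fall off.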

Real-World Gains: 100x Data Cut, Minimal Performance Hit

The numbers speak for themselves. AdaRadar achieves impressive compression rates with minimal impact on perception accuracy.

- Over 100x feature size reduction: This is a massive win for bandwidth-constrained drone systems.

- Minimal performance drop (about 1 percentage point): Across metrics such as Average Precision (AP) and Average Recall (AR), AdaRadar stays very close to the uncompressed baseline.

- Resilience to errors: Compared with simpler index-value-based compression, AdaRadar's spectral pruning approach is much more resilient to errors (Figure 10).

- Validated on multiple datasets: The system was tested on

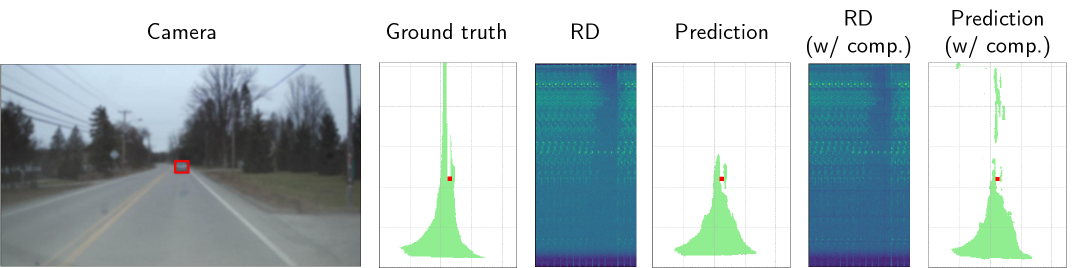

RADIal,CARRADA, andRadatrondatasets, demonstrating its robustness across different radar scenarios. - Qualitative Improvements: In some instances, the compressed range-Doppler output even 'outperforms the uncompressed baseline' (Figure 9), likely due to the compression acting as a noise filter.
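How a reduction above 100x can arise is easy to see with some arithmetic. The sketch below assumes 32-bit floating-point input, a fixed fraction of retained coefficients, and a fixed bit width after quantization; the example settings are hypothetical and are not the paper's reported configuration. `compression_ratio` is an illustrative helper.

```python
def compression_ratio(keep_fraction: float, coeff_bits: int,
                      raw_bits: int = 32, side_info_bits_per_value: float = 0.0) -> float:
    """Raw bits per element divided by compressed bits per element."""
    compressed_bits = keep_fraction * (coeff_bits + side_info_bits_per_value)
    return raw_bits / compressed_bits


# Hypothetical settings: (fraction kept, bits per kept value, side-info bits per kept value).
for keep, bits, side in [(0.05, 8, 0), (0.03, 8, 0), (0.03, 8, 12)]:
    ratio = compression_ratio(keep, bits, side_info_bits_per_value=side)
    print(f"keep {keep:.0%} at {bits}-bit, {side} side bits: {ratio:.1f}x")
```

Keeping 3% of coefficients at 8 bits gives about 133x, but if every kept value also had to carry a 12-bit position (enough to address a 64×64 map), the ratio would fall to about 53x, which is why side information matters.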

Figure 9: Qualitative comparisons. Networks are trained on raw radar tensors, visualized as range-Doppler (RD) images, along with ground-truth labels for freeway segmentation and vehicle detection. Camera images only provide contextual reference for the scene. RD images map Range to the y-axis and Doppler to the x-axis. We highlight the detected car in red and the segmented map in green in the bird’s-eye-view visualization. Notably, the compressed RD outperforms the uncompressed baseline.

Unlocking All-Weather Autonomy for Your Next Drone Build

This isn't just an academic exercise; AdaRadar has real implications for drone technology. For hobbyists building custom FPV or autonomous drones, it points toward integrating capable radar sensors without a supercomputer onboard or a fat data link. Consider the possibilities: a delivery drone navigating dense urban fog, a search-and-rescue UAV locating targets in heavy rain, or an inspection drone operating reliably at night, all relying on high-resolution radar data that doesn't overwhelm limited processing power or communication channels. This makes advanced all-weather autonomy more accessible for smaller, lighter platforms. By reducing the power spent on moving data, it could also help extend flight times, and it opens up mission profiles in conditions where optical sensors are effectively blind.

The Road Ahead: Where AdaRadar Still Needs to Soar

While AdaRadar is a significant leap, it's important to consider its current scope and what's still needed for widespread drone deployment.

- Computational Overhead: Although it reduces data transmission, the compression and decompression steps, along with the feedback loop, still require some computational resources on both the sensor and compute sides. For extremely resource-constrained micro-drones, even this might be a factor.

- Real-time Latency: The adaptive feedback loop, while efficient, introduces a slight delay as detection results are analyzed to adjust compression. For ultra-low latency applications, this feedback cycle needs to be incredibly fast.

- Generalization to Diverse Tasks: The paper's feedback loop is described in an object-detection setting, where proposed bounding boxes drive the proxy gradient. While the core compression mechanism is general, the proxy-gradient feedback relies on task-specific performance signals. Adapting it gracefully to a wider range of perception tasks (e.g., precise navigation, SLAM, swarm coordination) might require further research into task-agnostic feedback or more sophisticated gradient approximations.

- Hardware Implementation: The paper is primarily algorithmic. Real-world deployment would require efficient hardware implementations of the DCT, pruning, and quantization on the sensor side, potentially involving custom FPGA or ASIC designs for optimal performance and power efficiency at the edge.

Can You Build It? AdaRadar for the Homebrew Drone

For the average drone hobbyist, replicating AdaRadar's full adaptive feedback system from scratch would be a significant undertaking. Core components such as the Discrete Cosine Transform (DCT) and quantization are standard signal-processing techniques available in libraries like SciPy or OpenCV. The adaptive feedback loop, however, combines a deep learning perception model with a zeroth-order gradient approximation, which calls for a solid background in machine learning and embedded programming. The paper doesn't mention an open-source code release, which is not unusual for new research. A proof-of-concept would likely pair a compatible FMCW radar sensor with an edge AI board (such as an NVIDIA Jetson or a Google Coral) to run the perception network. The principles are sound, but the engineering effort places this in the realm of advanced builders or small research teams rather than casual DIY.

Broader Horizons: How AdaRadar Fits into the Edge AI Puzzle

AdaRadar’s work on efficient radar perception fits perfectly into the broader movement toward smarter, more capable edge AI. For example, related efforts like "Unified Spatio-Temporal Token Scoring for Efficient Video VLMs" by Zhang et al. tackle similar efficiency challenges but for video data, showing that token pruning can drastically improve computational efficiency for complex vision-language models on videos. This highlights a universal need for intelligent data reduction across different sensor modalities. Similarly, the advancements in "Loc3R-VLM: Language-based Localization and 3D Reasoning with Vision-Language Models" by Qu et al. demonstrate the sophisticated tasks drones could perform if they had access to robust, all-weather perception data, regardless of its source. AdaRadar helps provide that robust input. And finally, "Feeling the Space: Egomotion-Aware Video Representation for Efficient and Accurate 3D Scene Understanding" by Shi and Shin points to the importance of efficient visual processing for a drone's spatial understanding, complementing AdaRadar's focus on radar data. Together, these papers push the boundaries of how much intelligence we can pack into a drone, making them more aware and autonomous in their environments.

The Future of Flight is Clear

AdaRadar moves us closer to a future where drones aren't limited by bad weather or poor visibility, promising a new era of robust, always-on perception for autonomous flight.

Paper Details

- Title: AdaRadar: Rate Adaptive Spectral Compression for Radar-based Perception

- Authors: Jinho Park, Se Young Chun, Mingoo Seok

- Published: March 2026

- arXiv: 2603.17979

- Original paper: AdaRadar: Rate Adaptive Spectral Compression for Radar-based Perception (https://arxiv.org/abs/2603.17979)

- Related papers: Unified Spatio-Temporal Token Scoring for Efficient Video VLMs; Loc3R-VLM: Language-based Localization and 3D Reasoning with Vision-Language Models; Feeling the Space: Egomotion-Aware Video Representation for Efficient and Accurate 3D Scene Understanding

- Figures available: 10

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.