Foreground-Guided Tuning: Enhancing Drone Vision with Precision

FVG-PT improves vision-language models by focusing them on foreground elements, which could enable smarter drone navigation in complex environments.

TL;DR: Foreground View-Guided Prompt Tuning (FVG-PT) refines vision-language models by emphasizing critical visual features. This advancement could significantly enhance drone navigation in cluttered and complex spaces.

Refining Drone Vision: A Targeted Approach

Recent research has introduced Foreground View-Guided Prompt Tuning (FVG-PT), a method designed to improve vision-language models (VLMs) by sharpening their focus on the foreground: the objects and features most relevant in a scene. By reducing distractions from irrelevant background elements, this approach could change how drones navigate challenging environments, from crowded warehouses to dense forests.

Addressing Limitations in Current Models

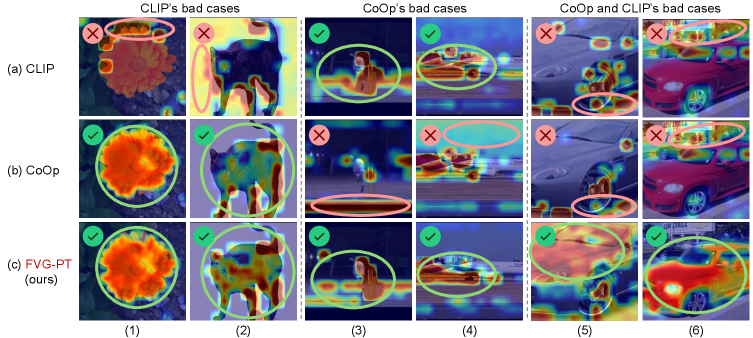

Vision-language models like CLIP have made strides in image recognition but often produce noisy attention maps. These maps can pull the model's focus toward unimportant elements such as shadows, clutter, or reflections, which is particularly problematic for drones tasked with precision navigation or obstacle avoidance. Existing prompt-tuning methods, such as CoOp, do not consistently resolve these issues, leaving room for improvement in how models prioritize visual information.

FVG-PT tackles this challenge by guiding the model toward the foreground during tuning, improving its ability to identify critical objects and features while retaining useful background context.
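One way to make the "noisy attention map" problem concrete is to measure how much of a model's attention mass lands on the object itself. The sketch below is a toy diagnostic, not something the FVG-PT paper defines: it assumes you already have a 2-D attention map (for example, one derived from CLIP's image encoder) and a binary foreground mask of the same shape, and `foreground_attention_ratio` is a hypothetical helper name.

```python
from typing import Sequence


# Hypothetical helper for illustration; not part of FVG-PT or CLIP.
def foreground_attention_ratio(
    attention: Sequence[Sequence[float]], mask: Sequence[Sequence[int]]
) -> float:
    """Fraction of total attention mass that falls inside a binary foreground mask."""
    if len(attention) != len(mask) or any(
        len(a_row) != len(m_row) for a_row, m_row in zip(attention, mask)
    ):
        raise ValueError("attention map and mask must have the same shape")
    total = sum(sum(row) for row in attention)
    if total <= 0:
        raise ValueError("attention map must have positive total mass")
    # Sum attention only over cells the mask marks as foreground.
    inside = sum(
        a
        for a_row, m_row in zip(attention, mask)
        for a, m in zip(a_row, m_row)
        if m
    )
    return inside / total


if __name__ == "__main__":
    # 3x3 toy map: the object of interest occupies only the centre cell.
    mask = [[0, 0, 0], [0, 1, 0], [0, 0, 0]]
    # "Noisy" attention spread over the background (shadows, clutter, reflections).
    noisy = [[0.1, 0.1, 0.1], [0.1, 0.2, 0.1], [0.1, 0.1, 0.1]]
    # "Focused" attention concentrated on the object.
    focused = [[0.0, 0.05, 0.0], [0.05, 0.8, 0.05], [0.0, 0.05, 0.0]]
    print(round(foreground_attention_ratio(noisy, mask), 2))    # 0.2
    print(round(foreground_attention_ratio(focused, mask), 2))  # 0.8
```

A low ratio, as in the noisy map, is the situation FVG-PT aims to correct. A well-tuned model raises the ratio without needing it to reach 1.0, since some background context remains useful.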

How FVG-PT Works

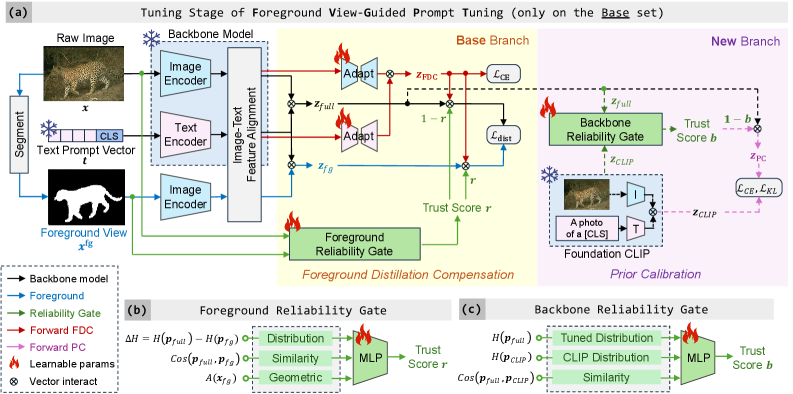

FVG-PT is designed as an add-on to existing VLM prompt-tuning methods and addresses foreground attention issues during the tuning process. Its architecture includes three key components:

- Foreground Reliability Gate (FRG): This module evaluates the quality of the foreground view and assigns reliability scores, guiding the model to prioritize relevant visual elements.

- Foreground Distillation Compensation (FDC): By adding adapters after image-text alignment, this component trains the model to focus on the foreground while minimizing distractions from the background.

- Prior Calibration Module: To avoid overemphasis on the foreground, this module introduces a balancing mechanism, ensuring the model retains useful contextual information from the background.

Figure: FVG-PT (right) enhances focus on foreground objects compared to baseline methods (left and center).

Figure: The FVG-PT system, showing how its components refine visual attention.
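The interplay of the three components can be illustrated with a deliberately simplified sketch. Everything in it is an assumption for illustration, not the authors' implementation: embeddings are plain Python lists, the reliability score is passed in rather than predicted by a learned gate, the distillation term is a reliability-weighted KL divergence, and prior calibration is approximated by a capped blend of full-image and foreground logits (`max_fg_weight` is a hypothetical parameter). The authors' GitHub code is the place to check the actual formulation.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / norm


def clip_logits(image: Vector, class_texts: Sequence[Vector], scale: float = 100.0) -> List[float]:
    """CLIP-style logits: scaled cosine similarity to each class text embedding (scale is assumed)."""
    return [scale * cosine(image, text) for text in class_texts]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax (subtracts the max logit before exponentiating)."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) for discrete distributions; terms with p_i == 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def gated_distillation_loss(
    fg_logits: Sequence[float], student_logits: Sequence[float], reliability: float
) -> float:
    """Hypothetical gate + distillation stand-in: pull the student's prediction
    toward the foreground view's, scaled by how reliable that view is judged to be."""
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must lie in [0, 1]")
    return reliability * kl_divergence(softmax(fg_logits), softmax(student_logits))


def calibrated_logits(
    full_logits: Sequence[float],
    fg_logits: Sequence[float],
    reliability: float,
    max_fg_weight: float = 0.7,
) -> List[float]:
    """Hypothetical calibration stand-in: blend full-image and foreground logits,
    capping the foreground weight so background context is never discarded."""
    weight = min(reliability, max_fg_weight)
    return [(1.0 - weight) * f + weight * g for f, g in zip(full_logits, fg_logits)]


if __name__ == "__main__":
    # Two toy classes with orthogonal text embeddings (e.g. "person" and "tree").
    class_texts = [[1.0, 0.0], [0.0, 1.0]]
    full = clip_logits([0.6, 0.8], class_texts)  # whole frame leans toward class 1
    fg = clip_logits([0.8, 0.6], class_texts)    # foreground crop favours class 0
    fused = calibrated_logits(full, fg, reliability=0.9)
    print(fused.index(max(fused)))                             # 0
    print(gated_distillation_loss(fg, full, reliability=0.9) > 0)  # True
```

In the toy example the full frame leans toward the background class while the foreground crop favors the target. With a reliability of 0.9, the cap limits the foreground weight to 0.7, so the blended logits favor the target without throwing away the full-image scores. A low reliability score shrinks both the distillation term and the foreground weight, which is the intuition behind gating on foreground quality.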

Performance Metrics: A Clear Advantage

FVG-PT delivers measurable improvements across diverse datasets and scenarios:

- Caltech101 Dataset: Achieved up to a 7.6% increase in classification accuracy compared to the baseline CLIP model.

- ImageNet Variations: Demonstrated robust performance in cluttered and noisy environments, maintaining high accuracy.

- Compatibility: Works with multiple backbone models, showcasing its adaptability as an add-on module.

These results highlight FVG-PT’s potential to enhance drone vision systems, making them more reliable and effective in real-world applications.

Practical Applications for Drone Technology

FVG-PT’s ability to refine visual focus has significant implications for autonomous drones:

- Indoor Navigation: Enables drones to maneuver through complex spaces like warehouses or factories by focusing on key objects and avoiding obstacles.

- Search and Rescue: Helps drones identify people, vehicles, or other critical targets in challenging outdoor environments.

- Delivery Systems: Enhances precision for drones navigating suburban areas filled with distractions like trees, cars, and power lines.

Implementation Challenges and Opportunities

While FVG-PT is modular and adaptable, it requires a solid understanding of machine learning and access to resources such as pre-trained VLMs (e.g., CLIP) and GPUs for training. The authors have open-sourced the code on GitHub, making it accessible to researchers and developers familiar with Python and PyTorch.

For hardware, drones equipped with onboard GPUs (e.g., NVIDIA Jetson modules) could potentially run VLMs integrated with FVG-PT, though optimization would be necessary for real-time applications. As the technology matures, it may become more accessible to hobbyists and smaller-scale developers.

Related Innovations in Drone Vision

FVG-PT is part of a broader effort to enhance vision-language models for autonomous systems. For instance, FOMO-3D focuses on long-tailed 3D object detection, addressing challenges in environments with rare or small obstacles. Similarly, UNBOX aims to improve the interpretability of visual models, a critical factor for building trust in AI-driven drones.

Looking Ahead

As drones continue to advance, innovations like FVG-PT could play an important role in making them smarter and more reliable. By refining how models process visual information, this technology may help drones operate effectively in complex, real-world scenarios. The future of autonomous drone vision is taking shape, one fine-tuned prompt at a time.

Paper Details

Title: FVG-PT: Adaptive Foreground View-Guided Prompt Tuning for Vision-Language Models

Authors: Haoyang Li, Liang Wang, Siyu Zhou, Jiacheng Sun, Jing Jiang, Chao Wang, Guodong Long, Yan Peng

Published: N/A

arXiv: 2603.08708


Written by

The Flight Desk. Sharing knowledge about drones and aerial technology.
