OmniStream: A Unified AI Core for Real-time Drone Autonomy

OmniStream unifies diverse AI capabilities like 2D/3D perception, reconstruction, and decision-making into a single, real-time streaming visual backbone, promising more intelligent and autonomous drones.

TL;DR: OmniStream is a new visual AI backbone that combines previously separate functions – perceiving the world, building 3D maps, and making decisions – into one unified system. It processes video streams frame-by-frame, designed for real-time operation on systems like autonomous drones.

Unifying the Drone's Senses

Current autonomous systems, especially drones, are often cobbled together from multiple specialized AI models. One separate model handles object detection, another builds a 3D map, and a third tries to reason about actions. Such fragmentation leads to significant complexity in system design, higher resource consumption due to redundant processing, and often, less robust and slower performance than an integrated system could offer. OmniStream aims to fundamentally change this by proposing a single "brain" that can handle these diverse tasks—perception, reconstruction, and action—simultaneously and in real-time, moving us closer to truly intelligent drone autonomy.

The Fragmented Brain Problem

The core issue is that modern visual AI agents need to be general, causal, and physically structured to operate effectively in dynamic, real-time streaming environments. However, today's vision foundation models remain largely fragmented. They specialize narrowly: some excel at image semantic perception (identifying what's in a static image), others at offline temporal modeling (understanding events and motion over pre-recorded video), and still others at spatial geometry (generating 3D reconstructions from single or multiple views). Such a siloed approach means that integrating these disparate capabilities into a single, efficient system suitable for a drone becomes a significant engineering challenge. It often forces compromises on critical drone constraints like weight, power consumption, and onboard computational resources. More importantly, it can result in slower, less coherent decision-making and a lack of true general-purpose understanding compared to what a holistically designed system could achieve. Drones need a unified understanding of their environment, not just a collection of separate insights.

How One Model Sees, Maps, and Thinks

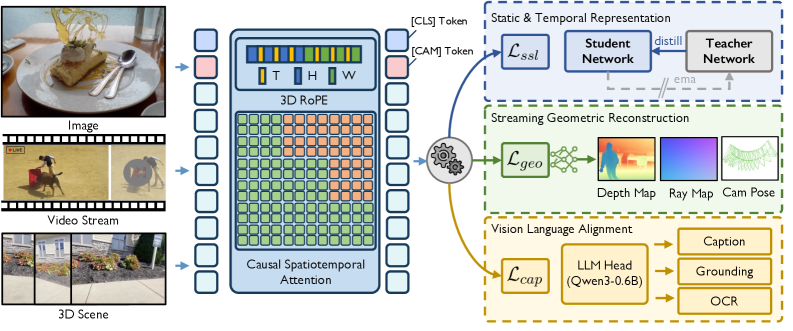

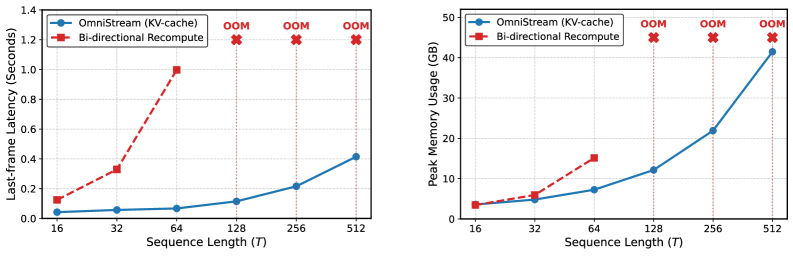

OmniStream directly tackles this fragmentation by introducing a unified streaming visual backbone. The underlying architecture leverages two critical innovations: causal spatiotemporal attention and 3D rotary positional embeddings (3D-RoPE). Causal spatiotemporal attention is key for real-time operation; it allows the model to process video streams efficiently, frame by frame, by only attending to past frames and the current one, preventing future information leakage and ensuring low-latency inference. The 3D-RoPE mechanism, on the other hand, is crucial for integrating spatial information directly into the model's understanding. With 3D-RoPE, the model inherently comprehends its observations in a 3D context, rather than merely processing flat 2D images and inferring depth as an afterthought. Together, these innovations enable the model to maintain a persistent KV-cache, effectively allowing it to "remember" what it has seen and understood in previous frames without needing to re-process everything. This persistent memory dramatically improves computational efficiency over long video sequences, making it suitable for continuous streaming applications.
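To make the caching idea concrete, here is a minimal sketch of streaming causal attention in plain Python. It is not OmniStream's implementation: the class name `StreamingAttention`, the single attention head, and the absence of learned projections are simplifying assumptions made for illustration. The point it shows is that each new frame only appends its keys and values to the cache and attends over what is already there, so earlier frames are never re-processed and future frames are never visible.

```python
import math


class StreamingAttention:
    """Single-head attention with a persistent key/value cache.

    Hypothetical illustration: a real backbone has many heads, many layers
    and learned projections. Here queries, keys and values are passed in.
    """

    def __init__(self):
        self.keys = []    # key vectors of every token seen so far
        self.values = []  # matching value vectors

    def step(self, frame_q, frame_k, frame_v):
        """Process the tokens of one newly arrived frame.

        Every query attends to all cached tokens (past frames) plus the
        tokens of the current frame. Frames that have not arrived yet are
        simply not in the cache, which is what makes the attention causal.
        """
        # Append the new frame instead of recomputing anything for old ones.
        self.keys.extend(frame_k)
        self.values.extend(frame_v)

        scale = 1.0 / math.sqrt(len(frame_q[0]))
        dim = len(self.values[0])
        outputs = []
        for q in frame_q:
            scores = [scale * sum(a * b for a, b in zip(q, k)) for k in self.keys]
            top = max(scores)  # subtract the max for a numerically stable softmax
            weights = [math.exp(s - top) for s in scores]
            total = sum(weights)
            outputs.append(
                [sum(w * v[d] for w, v in zip(weights, self.values)) / total
                 for d in range(dim)]
            )
        return outputs


# Usage: two frames of two tokens each, with 2-dimensional features.
attn = StreamingAttention()
frame1 = [[1.0, 0.0], [0.0, 1.0]]
frame2 = [[1.0, 1.0], [0.5, -0.5]]
out1 = attn.step(frame1, frame1, frame1)  # sees frame 1 only
out2 = attn.step(frame2, frame2, frame2)  # sees frames 1 and 2
print(len(attn.keys), "tokens cached after two frames")
```

Per-frame cost in this sketch grows with the number of cached tokens rather than with a full re-encoding of the video, which is the efficiency argument made above.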

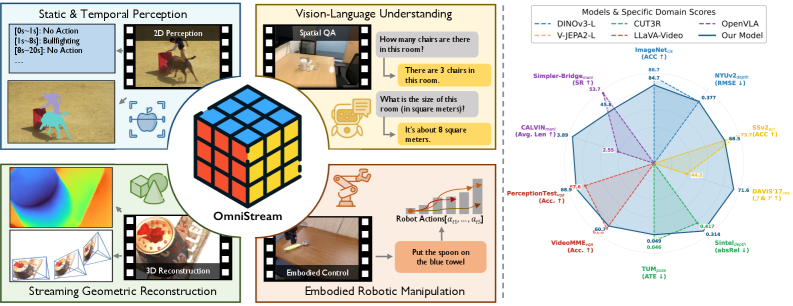

Figure 1: OmniStream supports a wide spectrum of tasks, including 2D/3D perception, vision-language understanding, and embodied robotic manipulation. The frozen features of its single backbone achieve highly competitive or superior performance compared to leading domain-specific experts.

Beyond its architectural design, the model's versatility stems from its comprehensive training strategy. The authors pre-trained OmniStream using a synergistic multi-task framework. The framework couples several learning paradigms: static and temporal representation learning (understanding objects and their motion), streaming geometric reconstruction (building 3D maps in real-time), and vision-language alignment (connecting visual input with descriptive text). Such an extensive training regimen, leveraging an impressive 29 different datasets, ensures that OmniStream develops broad, robust features capable of generalizing across various visual tasks, laying the groundwork for a truly general-purpose visual understanding system.

Figure 2: Overall framework of OmniStream. Equipped with 3D-RoPE and causal spatiotemporal attention, the unified backbone is trained via a multi-task framework that couples static and temporal representation learning, streaming geometric reconstruction, and vision-language alignment.
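The article does not spell out how 3D-RoPE is parameterized, so the following is only a sketch of one common way to extend rotary embeddings to three axes: split the channels into three groups and rotate each group by one coordinate. The function names, the choice of (t, h, w) as the three axes, and the frequency base of 10000 are assumptions, not details taken from the paper.

```python
import math


def rotate_pairs(vec, position, base=10000.0):
    """Standard rotary embedding along one axis.

    Consecutive channel pairs are rotated by an angle proportional to the
    position, with a different frequency per pair.
    """
    out = list(vec)
    half = len(vec) // 2
    for i in range(half):
        theta = position / (base ** (i / half))
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[2 * i], vec[2 * i + 1]
        out[2 * i] = x * c - y * s
        out[2 * i + 1] = x * s + y * c
    return out


def rope_3d(vec, t, h, w):
    """Assumed 3D variant: one third of the channels per coordinate."""
    if len(vec) % 6 != 0:
        raise ValueError("channel count must be divisible by 6")
    g = len(vec) // 3
    return (
        rotate_pairs(vec[:g], t)
        + rotate_pairs(vec[g:2 * g], h)
        + rotate_pairs(vec[2 * g:], w)
    )


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# Usage: the query/key score depends only on the relative offset between
# the two tokens, not on their absolute positions.
q = [0.3, -1.2, 0.7, 0.1, -0.5, 0.9]
k = [1.1, 0.4, -0.6, 0.8, 0.2, -0.3]
score_a = dot(rope_3d(q, 1, 2, 3), rope_3d(k, 0, 1, 1))
score_b = dot(rope_3d(q, 5, 7, 9), rope_3d(k, 4, 6, 7))
print(abs(score_a - score_b) < 1e-9)
```

Because rotations preserve vector length and only relative offsets affect the attention score, this kind of encoding lets attention reason about where tokens sit relative to each other along each axis.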

Performance That Counts, Not Just Dominates

The paper explicitly states that OmniStream's primary objective isn't to achieve benchmark-specific dominance, but rather to demonstrate the viability of training a single, versatile vision backbone. Crucially, even with a strictly frozen backbone (meaning its core learned features are used directly, without any task-specific fine-tuning of the backbone itself), OmniStream consistently achieves competitive performance when compared to specialized expert models across a broad spectrum of benchmarks. This is best read as frozen-feature transfer rather than strict "zero-shot" or "few-shot" evaluation, and that kind of generalization is a strong indicator of its potential.
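Evaluating a frozen backbone usually means training only a small head on top of fixed features, often called linear probing. The toy sketch below illustrates that setup in plain Python. Everything in it is hypothetical: `FROZEN_W` stands in for a pretrained backbone, the six 2D points stand in for a dataset, and the paper's actual probing protocol is not described in this article.

```python
import math

# Stand-in for a pretrained backbone. These weights are never updated.
FROZEN_W = ((1.0, -1.0), (0.5, 0.5))


def frozen_features(x):
    """Map a raw input to features using the fixed backbone weights."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_linear_probe(inputs, labels, steps=200, lr=0.5):
    """Fit a logistic-regression head on frozen features with gradient descent."""
    feats = [frozen_features(x) for x in inputs]  # computed once, backbone untouched
    w = [0.0] * len(feats[0])
    b = 0.0
    n = len(feats)
    losses = []
    for _ in range(steps):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        loss = 0.0
        for f, y in zip(feats, labels):
            p = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b)
            loss -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
            for j, fj in enumerate(f):
                grad_w[j] += (p - y) * fj
            grad_b += p - y
        losses.append(loss / n)
        # Only the head parameters (w, b) are updated.
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b, losses


def predict(x, w, b):
    f = frozen_features(x)
    return 1 if sum(wi * fi for wi, fi in zip(w, f)) + b > 0 else 0


# Usage on a tiny, linearly separable toy problem.
inputs = [(-1, 1), (-2, 1), (-1, 2), (1, -1), (2, -1), (1, -2)]
labels = [0, 0, 0, 1, 1, 1]
w, b, losses = train_linear_probe(inputs, labels)
print("loss went from", round(losses[0], 3), "to", round(losses[-1], 3))
```

If a small head like this can solve a task, the useful information was already present in the frozen features, which is the argument the frozen-backbone results are making.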

Key performance highlights from their extensive evaluations include:

- Image and Video Probing: OmniStream delivers competitive results against specialized experts in understanding both static images and dynamic video content, indicating a strong foundation in general visual perception.

- Streaming Geometric Reconstruction: It excels in tasks like real-time depth estimation from video streams, demonstrating remarkable temporal coherence across long sequences. This makes it a critical capability for robust 3D mapping and Simultaneous Localization and Mapping (SLAM) on drones.

- Complex Video and Spatial Reasoning: The model shows a strong ability to understand intricate dynamic scenes and complex spatial relationships, moving beyond simple object identification to contextual understanding.

- Robotic Manipulation (unseen at training): Perhaps most impressively, OmniStream performs well on robotic manipulation tasks it was not explicitly trained on. This performance highlights its significant generalization capabilities for embodied agents like drones, suggesting it can adapt to new action-oriented challenges.

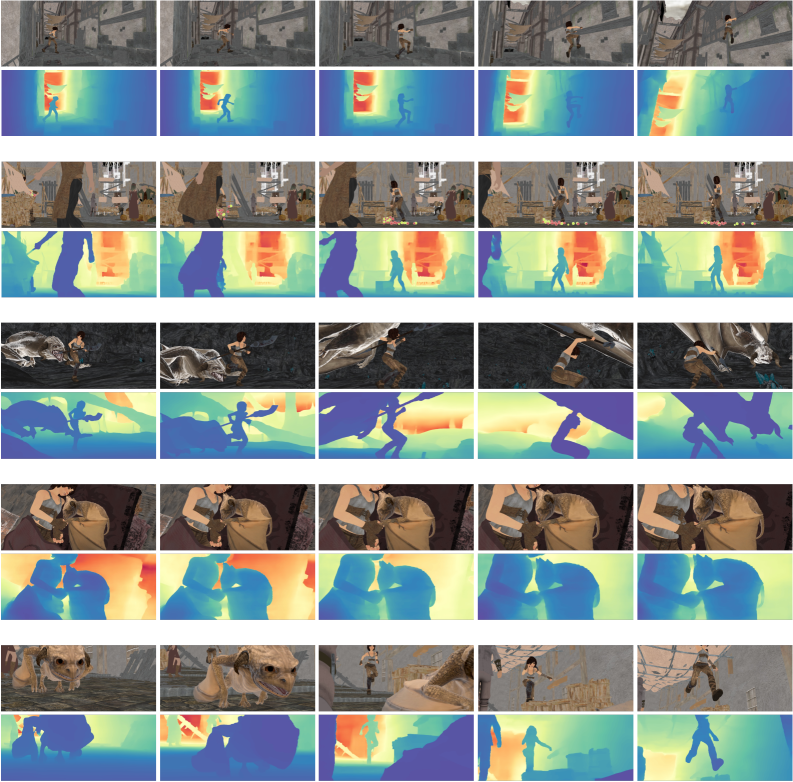

Figure 3: Qualitative results on Sintel video depth reconstruction. OmniStream maintains temporal coherence across long sequences.

The qualitative results on video depth reconstruction, particularly from datasets like Sintel, visually confirm its strength. These demonstrations show remarkably smooth and accurate 3D mapping over time, which is a common failure point for fragmented systems that struggle to maintain consistency and avoid jitter between frames.

Figure 4: More qualitative results on the Sintel video depth, illustrating the model's consistent performance across various scenes.

This consistent and versatile performance across diverse tasks without requiring task-specific fine-tuning is what truly sets OmniStream apart and makes it genuinely noteworthy for future autonomous systems.

The Real Impact on Autonomous Drones

OmniStream represents a significant leap forward for drone autonomy. For years, integrating robust perception, accurate 3D mapping (often through SLAM systems), and intelligent decision-making into a single, compact, and power-efficient drone package has been a major engineering challenge, often referred to as the "holy grail" of robotics. OmniStream brings us significantly closer to this reality.

- Unified Perception and SLAM: Instead of a drone running separate, heavy modules for visual odometry, complex mapping, and object detection, a single OmniStream backbone could potentially handle all these functions. Such consolidation means fewer computational resources, reduced power draw, and critically, much lower latency for critical tasks like collision avoidance and dynamic path planning.

- Enhanced Situational Awareness: A drone equipped with OmniStream could simultaneously understand not only what objects are in its environment but also their precise 3D location, how they are moving, and even relate this information to high-level mission objectives expressed in natural language. This capability provides a truly holistic understanding of its operational space.

- Robust Autonomous Navigation: Consider a drone navigating a complex industrial facility or a dense forest. With OmniStream, it wouldn't just avoid obstacles; it could understand their context, identify critical equipment for inspection, or distinguish between navigable paths and impassable terrain, all based on real-time visual reasoning. Its proven ability to maintain temporal coherence in 3D reconstruction means more stable, reliable, and safer autonomous navigation, even in challenging environments.

- Adaptive Mission Planning and Execution: The vision-language alignment capability is transformative. Drones could interpret abstract, high-level commands from a human operator ("inspect the damaged pipe on the third floor of sector C") and autonomously translate these into specific visual goals, recognition targets, and optimized flight paths. This opens doors for more intuitive human-drone interaction and more flexible mission execution.

- Optimized Edge AI Efficiency: The design principles behind OmniStream, particularly its real-time, frame-by-frame processing with a persistent KV-cache, are specifically geared towards efficiency. These principles make it a strong candidate for deployment on resource-constrained drone hardware, such as the NVIDIA Jetson series or future Qualcomm Snapdragon flight platforms, bringing advanced AI directly to the edge.
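A persistent KV-cache saves compute, but it costs memory, and on edge hardware that trade-off is worth estimating up front. The back-of-the-envelope calculation below uses the standard transformer estimate of two cached tensors (keys and values) per layer. The layer count, tokens per frame, and hidden size are made-up illustrative values, not OmniStream's real configuration, and the optional sliding `window` is an assumption added here, not something the paper is reported to use.

```python
def kv_cache_bytes(frames, tokens_per_frame, layers, hidden_dim,
                   bytes_per_value=2, window=None):
    """Estimate KV-cache size in bytes for a streaming transformer.

    The factor of 2 covers keys plus values. bytes_per_value=2 corresponds
    to 16-bit floats. If window is given, only the most recent `window`
    frames are kept in the cache.
    """
    cached_frames = frames if window is None else min(frames, window)
    cached_tokens = cached_frames * tokens_per_frame
    return 2 * layers * cached_tokens * hidden_dim * bytes_per_value


GIB = 1024 ** 3

# Hypothetical configuration, chosen only to make the arithmetic concrete.
config = dict(tokens_per_frame=256, layers=24, hidden_dim=1024)

for frames in (10, 100, 1000):
    unbounded = kv_cache_bytes(frames, **config) / GIB
    windowed = kv_cache_bytes(frames, window=64, **config) / GIB
    print(f"{frames:5d} frames: {unbounded:6.2f} GiB unbounded, "
          f"{windowed:5.2f} GiB with a 64-frame window")
```

With these assumed numbers an unbounded cache grows linearly with the number of frames, so long flights would need some form of cache bounding or compression to fit in the few gigabytes of memory typical of small onboard computers.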

The Roadblocks Ahead

While OmniStream is a compelling step, it's essential to view it with a pragmatic lens. It's not a magic bullet, and the paper implicitly and explicitly highlights several areas for further development before widespread drone deployment:

- Actual Computational Footprint on Edge Hardware: The paper describes OmniStream as "efficient" for a unified model, but the specific computational demands (e.g., FLOPs, memory usage) on low-power drone edge hardware are not detailed. "Efficient" for a large foundation model might still be too heavy for a Raspberry Pi-level compute board or even a Jetson Nano without significant model pruning, quantization, or specialized hardware acceleration. Real-world power consumption metrics are also absent.

- Generalization to Truly Novel and Adversarial Environments: While trained on 29 datasets, real-world drone operations often involve highly dynamic, unstructured, and sometimes adversarial environments that may not be fully represented. Such environments include extreme weather conditions, very low light, dense foliage, or environments with out-of-distribution objects or scenes. Its robustness and reliability in such truly novel scenarios require further testing and validation.

- Real-time Latency Benchmarks and Throughput: The paper claims "efficient, frame-by-frame online processing," which is a good start. However, specific latency numbers (e.g., milliseconds per frame) or throughput (frames per second) on various representative drone hardware platforms are not provided. For drone applications, where even milliseconds can be critical for reactive collision avoidance or maintaining stable flight, these metrics are paramount.

- Integration with Existing Drone Ecosystems: The paper focuses on the foundational AI model. How OmniStream would be seamlessly integrated with existing drone flight controllers (e.g., PX4, ArduPilot), various sensor fusion pipelines (e.g., IMU, GPS, LiDAR), and specific robotic manipulation platforms is left as future work. It's a powerful visual backbone, but not yet a plug-and-play solution for a fully autonomous drone system.

- Robustness to Sensor Noise and Failures: Drones operate in environments prone to sensor noise, motion blur, and occasional sensor dropouts. The paper doesn't explicitly discuss OmniStream's resilience to these real-world challenges, which are common for vision-based systems on mobile platforms.
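The latency and throughput numbers called for above are straightforward to collect once a model is available. The sketch below shows one way to measure them on target hardware using only the Python standard library. The `process_frame` callable is a placeholder for whatever inference function you end up with, and the dummy workload in the usage example obviously says nothing about OmniStream's real speed.

```python
import statistics
import time


def benchmark_per_frame(process_frame, frames, warmup=3):
    """Measure per-frame latency and throughput of a frame-processing callable.

    The first `warmup` frames are run but not timed, so one-off start-up
    costs (caches, lazy initialization) do not skew the statistics.
    """
    for frame in frames[:warmup]:
        process_frame(frame)

    latencies_ms = []
    for frame in frames[warmup:]:
        start = time.perf_counter()
        process_frame(frame)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)

    ordered = sorted(latencies_ms)
    # Simple nearest-rank style estimate of the 95th percentile.
    p95 = ordered[min(len(ordered) - 1, int(0.95 * len(ordered)))]
    mean_ms = statistics.mean(latencies_ms)
    return {
        "frames_measured": len(latencies_ms),
        "mean_ms": mean_ms,
        "p95_ms": p95,
        "fps": 1000.0 / mean_ms if mean_ms > 0 else float("inf"),
    }


# Usage with a dummy workload standing in for real model inference.
dummy_frames = [list(range(1000)) for _ in range(20)]
stats = benchmark_per_frame(lambda frame: sum(frame), dummy_frames)
print(stats)
```

For reactive tasks such as collision avoidance, the tail latency (`p95_ms` here) matters more than the mean, since a single slow frame can be the one that counts.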

Building This Yourself? Not Yet.

For drone hobbyists and individual builders, replicating OmniStream from scratch is currently not feasible. It is a large-scale research effort that requires substantial computational resources for training on dozens of diverse datasets, and likely specialized hardware. The backbone itself is a complex vision transformer variant, a type of deep learning model that is resource-intensive to train. However, if the authors were to release pre-trained weights and an optimized inference pipeline (perhaps with ONNX support), integrating it into a hobbyist-level drone project could become possible, especially with increasingly powerful single-board computers like the Jetson Orin Nano, or even the Raspberry Pi 5 paired with an add-on AI accelerator. As of now, the paper doesn't mention open-sourcing the code or weights, but that's a common and welcome next step for such foundational research. For the immediate future, this work is more about understanding the direction of advanced AI that will eventually trickle down into commercial drone platforms, and later, potentially into more accessible hobbyist projects.

Broader Horizons: Related Innovations

OmniStream's success is deeply intertwined with advancements in efficient video processing and robust reasoning, areas where other recent papers are also making strides. For instance, "Attend Before Attention: Efficient and Scalable Video Understanding via Autoregressive Gazing" by Shi et al. introduces AutoGaze, a method for intelligently focusing attention in long videos. This technique could directly enhance OmniStream's perception component, making it even more computationally efficient on resource-constrained drone hardware, especially when dealing with high-resolution, extended video streams. Similarly, "EVATok: Adaptive Length Video Tokenization for Efficient Visual Autoregressive Generation" by Xiong et al. provides methods for adaptive video tokenization. Optimizing how raw video pixels are compressed into discrete tokens is a critical underlying technology that directly impacts the computational cost and reconstruction quality, a delicate balance OmniStream needs to strike for real-time operation on edge devices. Furthermore, the intelligent decision-making aspect required for OmniStream's "Act" capabilities could significantly benefit from robust reasoning frameworks. "MM-CondChain: A Programmatically Verified Benchmark for Visually Grounded Deep Compositional Reasoning" by Shen et al. focuses on verifiable complex reasoning, which is essential for ensuring reliable and safe autonomous actions in dynamic and unpredictable drone environments. Finally, to manage the varying power and compute budgets often found on drone platforms, the concept of "One Model, Many Budgets: Elastic Latent Interfaces for Diffusion Transformers" by Haji-Ali et al. offers intriguing pathways. This innovation could allow OmniStream to dynamically adapt its computational load, enabling principled latency-quality trade-offs based on available power and mission requirements.

OmniStream represents a significant stride towards truly unified, real-time AI for autonomous systems. While not a ready-to-fly solution today, it provides a compelling vision for future drone intelligence, where perception, 3D mapping, and intelligent action are no longer disparate modules but integrated facets of a single, coherent cognitive system. Keep a close eye on this space; the capabilities demonstrated here will fundamentally reshape what our drones can achieve, moving beyond simple automation to genuine autonomy.

Paper Details

Title: OmniStream: Mastering Perception, Reconstruction and Action in Continuous Streams

Authors: Yibin Yan, Jilan Xu, Shangzhe Di, Haoning Wu, Weidi Xie

Published: March 2026

arXiv: 2603.12265

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.