How Humanoid Recovery Tactics Could Help Drones Survive Crashes

Integrating balance metrics into reinforcement learning could enable drones to recover autonomously from crashes, inspired by humanoid robots.

TL;DR: A recent study integrates classical balance metrics into reinforcement learning systems for humanoid robots, achieving a 93.4% recovery success rate. This approach could inspire drones to autonomously recover from crashes, enhancing unmanned aerial navigation.

Can Drones Learn to Recover Like Humans?

Picture this: a drone crashes into a tree, its rotors spinning helplessly as it lies on the ground. Crash recovery remains a major challenge in drone technology. But what if drones could borrow recovery tactics from humanoid robots? A recent study demonstrates how reinforcement learning (RL) combined with balance control principles enables humanoid robots to recover from falls with remarkable precision. Could this pave the way for drones to autonomously recover from collisions?

Why Drones Struggle with Crash Recovery

Drones, much like humanoid robots, face significant challenges when operating in unpredictable environments. A collision—whether caused by wind, obstacles, or user error—often leaves drones incapacitated. Current solutions, such as crash cages or parachutes, are bulky and costly, while manual intervention remains the norm. Unlike humans or advanced humanoid robots, drones lack the ability to autonomously recover after a crash.

Humanoid robots have recently made strides in recovery using RL, but these systems often treat recovery as a black-box optimization problem, ignoring fundamental balance principles. For drones, which operate in even more dynamic environments, incorporating balance-centric strategies could be a game-changer.

Learning from Humanoids: Embedding Balance Metrics

The study proposes a novel RL-based approach that embeds classical balance control metrics into the recovery process. Key metrics include:

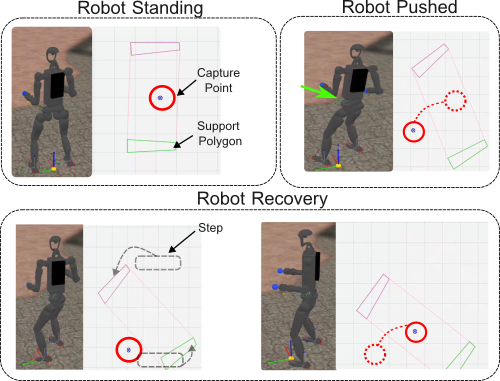

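- Capture Point: The point on the ground where the robot would need to step, or shift its center of pressure, to come to a complete stop.

- Center-of-Mass (CoM): The mass-weighted average position of the robot’s body, tracked together with its velocity.

- Centroidal Momentum: The robot’s total linear and angular momentum, expressed about its center of mass.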
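To make the capture point concrete, here is a minimal sketch using the textbook linear inverted pendulum model, where the capture point is the CoM ground position plus the CoM velocity divided by the pendulum's natural frequency. This is an assumption for illustration: the article does not state which formulation the study uses, and the function and variable names are hypothetical.

```python
import math

GRAVITY = 9.81  # m/s^2


def capture_point(com_xy, com_vel_xy, com_height):
    """Instantaneous capture point under the linear inverted pendulum model.

    com_xy:      horizontal CoM position (x, y) in metres
    com_vel_xy:  horizontal CoM velocity (vx, vy) in m/s
    com_height:  CoM height above the ground in metres (assumed constant)
    """
    if com_height <= 0.0:
        raise ValueError("com_height must be positive")
    # Natural frequency of the pendulum: omega = sqrt(g / z)
    omega = math.sqrt(GRAVITY / com_height)
    # Capture point: xi = x + x_dot / omega, applied per horizontal axis
    return tuple(p + v / omega for p, v in zip(com_xy, com_vel_xy))


# Illustrative values only: CoM 0.9 m high, drifting forward at 0.5 m/s
cp = capture_point((0.0, 0.0), (0.5, 0.0), 0.9)
```

With these illustrative numbers the capture point lands roughly 0.15 m ahead of the CoM. The faster the body is moving, the farther away the point where balance can be restored.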

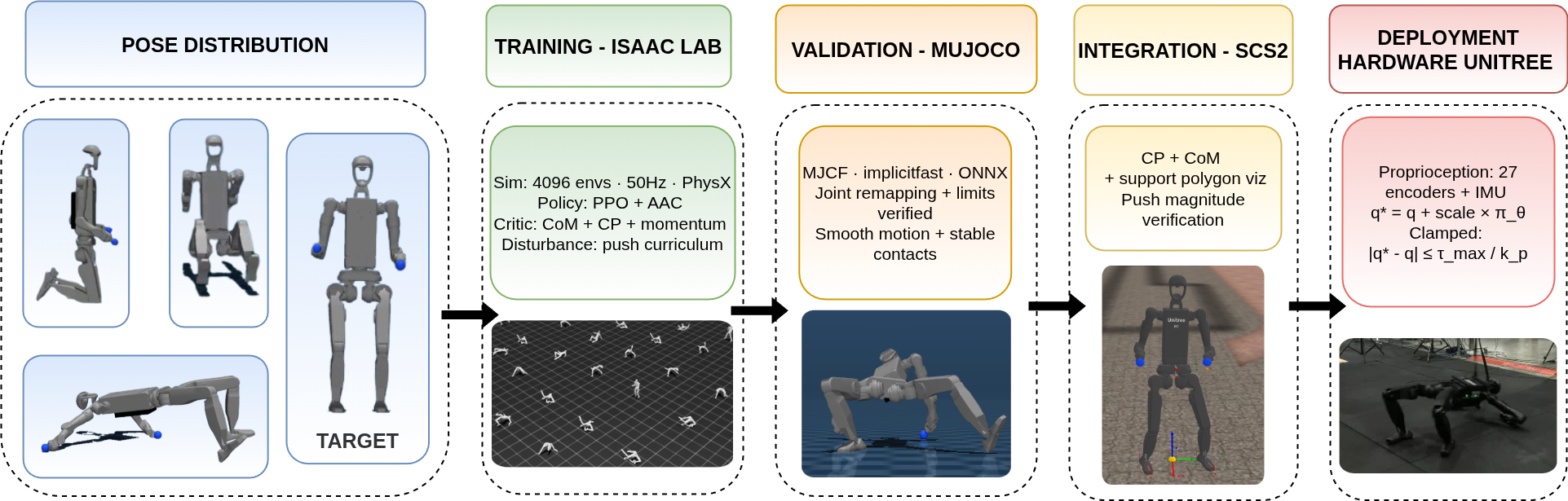

During training, the RL system uses these metrics to shape learning rewards, ensuring recovery strategies are grounded in physical principles rather than random trial-and-error. The actor network relies solely on proprioceptive inputs (internal sensors) for real-world execution, while the privileged critic processes balance metrics during training.
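The sketch below shows one way reward shaping and the actor/critic split described above could fit together. It is not the study's reward function: the individual terms, weights, and scales are all hypothetical, chosen only to show how balance metrics can enter the reward while staying out of the actor's observations.

```python
import math


def balance_shaping_reward(capture_point_xy, support_center_xy,
                           com_height, target_height,
                           angular_momentum_norm,
                           w_cp=1.0, w_height=0.5, w_momentum=0.1,
                           cp_scale=0.2):
    """Hypothetical shaping reward built from the three balance metrics.

    All weights and scales are illustrative, not values from the study.
    """
    # Reward keeping the capture point near the centre of the support area
    cp_error = math.dist(capture_point_xy, support_center_xy)
    r_cp = math.exp(-(cp_error / cp_scale) ** 2)
    # Reward bringing the CoM up to the standing height
    r_height = math.exp(-abs(target_height - com_height))
    # Penalise large angular momentum about the CoM
    r_momentum = -angular_momentum_norm
    return w_cp * r_cp + w_height * r_height + w_momentum * r_momentum


def split_observations(proprio, balance_metrics):
    """Asymmetric actor-critic inputs.

    The actor sees proprioception only, so it can run on hardware.
    The critic also sees privileged balance metrics, which are only
    available in simulation during training.
    """
    actor_obs = list(proprio)
    critic_obs = list(proprio) + list(balance_metrics)
    return actor_obs, critic_obs


# Illustrative call: balanced, upright, no angular momentum
best = balance_shaping_reward((0.0, 0.0), (0.0, 0.0), 0.9, 0.9, 0.0)
actor_obs, critic_obs = split_observations([0.1, -0.2, 0.05], [0.0, 0.0, 0.9])
```

Because the balance metrics only appear in the reward and the critic input, the deployed policy never needs them at run time.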

This approach enables the robot to learn a range of recovery behaviors, from minor balance corrections to standing up after extreme falls, without relying on pre-scripted actions.

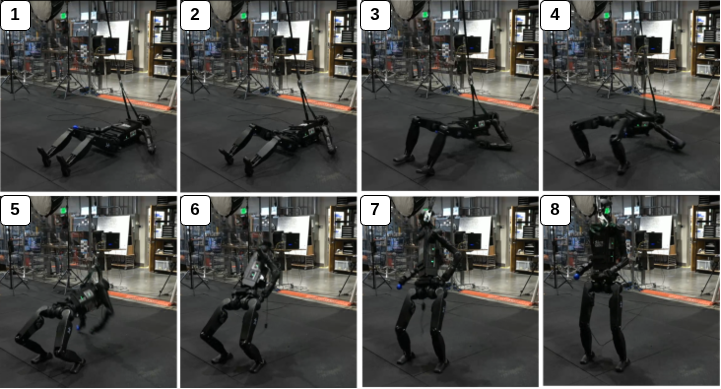

Caption: Stand-up sequence on the Unitree H1-2 hardware. Frames 1–8 show recovery from a fallen configuration (1) to upright stance (8).

Caption: End-to-end pipeline for training the stand-up controller on the Unitree H1-2 robot.

Results: 93.4% Recovery Success

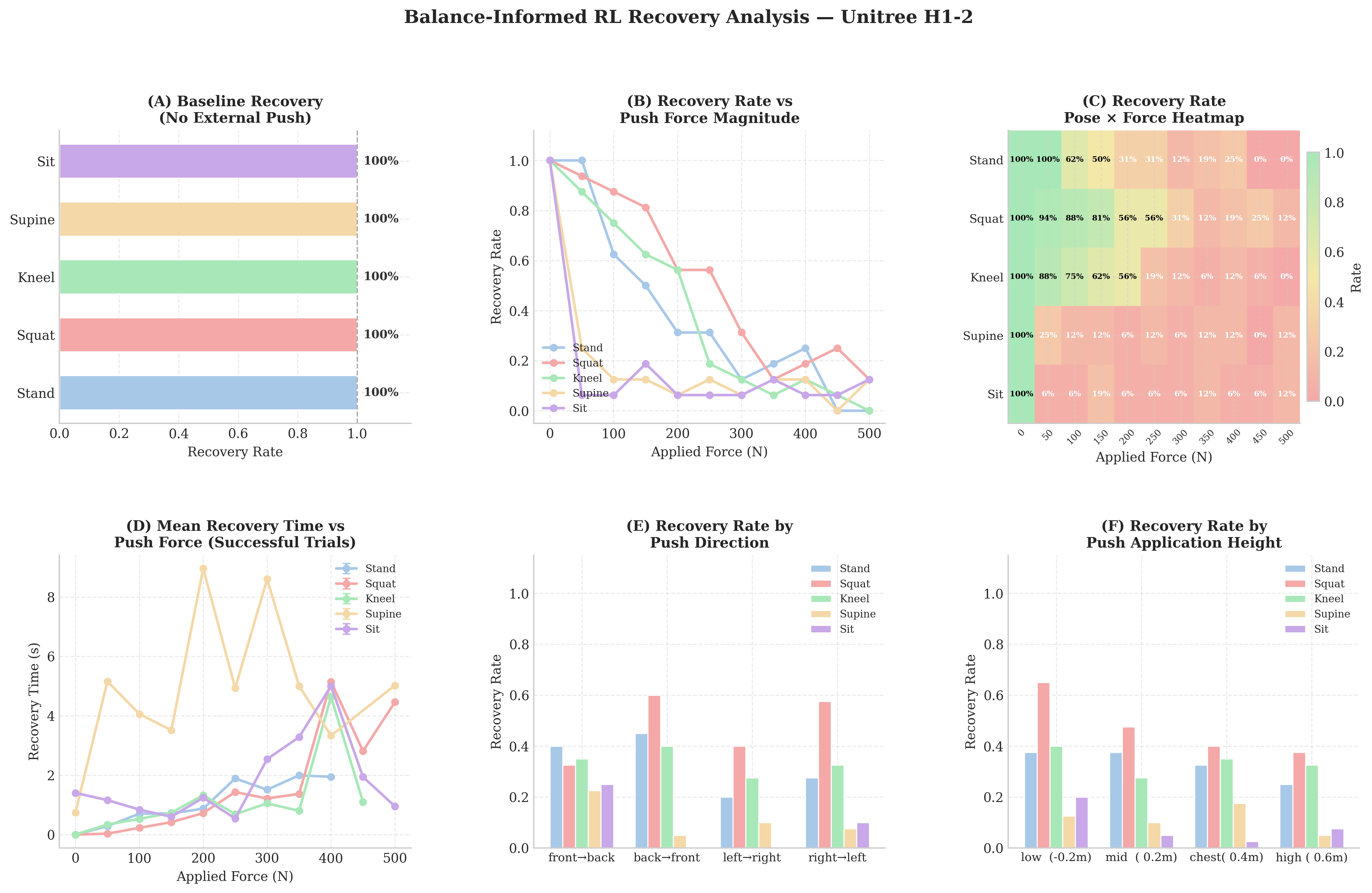

The study’s results are impressive. Tests on the Unitree H1-2 robot revealed:

- 93.4% recovery success rate across varying initial poses and fall configurations.

- Smooth transitions through balance responses, from minor corrections to complex recovery maneuvers.

- Generalization to new environments via sim-to-sim transfer, validated in MuJoCo simulations and preliminary hardware tests.

Key performance metrics include:

- Capture Point Recovery: Successful re-centering of balance indicators.

- Recovery Envelope: Effective responses to forces up to 500 N.

- Time to Recover: Predictable recovery times based on perturbation magnitude.
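As a rough illustration of how a time-to-recover figure could be extracted from a logged trial, the sketch below scans a capture-point error trace for the first moment the error drops below a threshold and stays there. The threshold, hold duration, and function name are assumptions made for this example. The article does not say how the study defines recovery time.

```python
def time_to_recover(cp_errors, dt, threshold=0.05, hold_steps=10):
    """Return the time in seconds at which recovery begins, or None.

    cp_errors:  capture-point error (m) sampled once per control step
    dt:         sampling period in seconds
    threshold:  error below which the robot counts as balanced (assumed)
    hold_steps: consecutive samples that must stay below the threshold
    """
    run = 0  # length of the current below-threshold streak
    for i, err in enumerate(cp_errors):
        run = run + 1 if err < threshold else 0
        if run >= hold_steps:
            # Report the start of the streak, not its end
            return (i - hold_steps + 1) * dt
    return None


# Illustrative trace: error decays, then stays small for ten samples
trace = [0.3, 0.2, 0.1] + [0.01] * 10
t_rec = time_to_recover(trace, dt=0.02)
```

Requiring the error to stay low for several samples avoids counting a brief swing through the balanced state as a recovery.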

Caption: Capture-point evolution during push recovery.

Caption: MuJoCo analysis of recovery rates and times in diverse scenarios.

Why This Matters for Drones

For drones, this research offers exciting possibilities:

- Autonomous Crash Recovery: Embedding balance-informed RL policies could enable drones to stabilize and regain flight after collisions.

- Improved Navigation: Metrics like CoM tracking could enhance stability during wind gusts or sudden disturbances.

- Lightweight Solutions: Software-based recovery eliminates the need for bulky crash-proofing gear.

- Extended Mission Durability: Autonomous recovery reduces downtime and human intervention, making drones more reliable for remote operations.

These advancements could lead to self-righting drones capable of handling complex missions, such as search-and-rescue, delivery, or infrastructure inspection.
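What a drone equivalent of a balance metric would look like is an open question. One speculative candidate is an "uprightness" term that measures how far the body's thrust axis has tilted away from vertical, which a self-righting policy could be rewarded for reducing. The sketch below is purely hypothetical and not from the study. It assumes a unit quaternion in (w, x, y, z) order and a body z-axis that points up when the drone is level.

```python
import math


def tilt_angle(quat_wxyz):
    """Angle in radians between the body z-axis and world up.

    Assumes quaternion order (w, x, y, z); normalises defensively.
    """
    w, x, y, z = quat_wxyz
    norm = math.sqrt(w * w + x * x + y * y + z * z)
    if norm == 0.0:
        raise ValueError("quaternion must be non-zero")
    x, y = x / norm, y / norm
    # R[2][2] of the rotation matrix: world-z component of the body z-axis
    up_alignment = 1.0 - 2.0 * (x * x + y * y)
    # Clamp to guard against rounding just outside [-1, 1]
    return math.acos(max(-1.0, min(1.0, up_alignment)))


def uprightness_reward(quat_wxyz):
    """Hypothetical shaping term: 1.0 when level, 0.0 when fully inverted."""
    return 0.5 * (1.0 + math.cos(tilt_angle(quat_wxyz)))


level = uprightness_reward((1.0, 0.0, 0.0, 0.0))    # no rotation
flipped = uprightness_reward((0.0, 1.0, 0.0, 0.0))  # 180 degrees about x
```

A term like this would play a role loosely similar to the CoM height reward for a humanoid. It gives the policy a smooth signal to climb while it is still far from a flyable attitude.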

Limitations of the Study

While promising, this research has notable limitations:

- Hardware Constraints: The method was tested on a single humanoid platform (Unitree H1-2). Adapting it to drones, whose dynamics are fundamentally different (underactuated flight rather than legged ground contact), will require significant modifications.

- Simulation vs. Reality: Results in simulation are promising, but hardware tests remain preliminary. Full-scale deployment on drones is untested.

- Proprioception Reliance: The RL policy relies solely on internal sensors for execution. Drones may need external sensing, such as cameras or rangefinders, on top of their onboard IMUs to replicate this approach.

- Energy Efficiency: Recovery maneuvers can be energy-intensive, which may limit their practicality for long-duration drone missions.

Can Hobbyists Try This?

For engineers and hobbyists interested in experimenting:

- Hardware: A drone platform with high-fidelity sensors and sufficient processing power (e.g., NVIDIA Jetson) is essential.

- Software: Training requires access to physics simulators like Isaac Gym or MuJoCo and an RL stack, for example PPO implemented in PyTorch or run through RLlib.

- Challenges: Without access to drone-specific balance metrics, replicating this method will require significant customization.

Related Work

Other research that complements this study includes:

- Keypoint Detection for Recovery: Techniques for identifying critical balance points.

- Vision-Based Models: Using visual data for dynamic recovery strategies.

Paper Details

- Original Paper: Embedding Classical Balance Control Principles in Reinforcement Learning for Humanoid Recovery

- Related Papers: ER-Pose: Rethinking Keypoint-Driven Representation Learning for Real-Time Human Pose Estimation, FOMO-3D: Using Vision Foundation Models for Long-Tailed 3D Object Detection

- Figures Available: 5

Written by

The Flight Desk

Sharing knowledge about drones and aerial technology.